You have run the pilots. You have approved the budget. You have sat through the demos. And you are still waiting for AI to show up in your P&L. The problem is not your AI strategy. The problem is what AI has to run on.

AI integration with legacy systems fails at the foundation level. The AI technology works; the systems underneath it were never designed to support it. Every failed pilot, every abandoned proof of concept, every “we need more time” update from the project team traces back to the same cause: a stack that cannot give AI what AI needs.

According to Agentic AI Solutions (2026), 78% of organizations say AI readiness is a top priority, yet only 23% have completed a formal AI readiness assessment. That gap — between aspiration and infrastructure — is where your AI investment goes to die.

This article explains why it keeps happening and what the actual path forward looks like.

Your AI Pilots Aren’t Failing — Your Stack Is

Your AI pilots are not failing because of the AI. They are failing because the system the AI has to read, write to, and integrate with was built before AI existed as a production concept — and it shows.

This distinction matters because it changes the solution. If the pilots are failing, you build better pilots. If the stack is failing, you fix the stack. Most mid-market organizations spend two or three pilot cycles learning this the hard way, then arrive at a modernization conversation eighteen months late and significantly over budget.

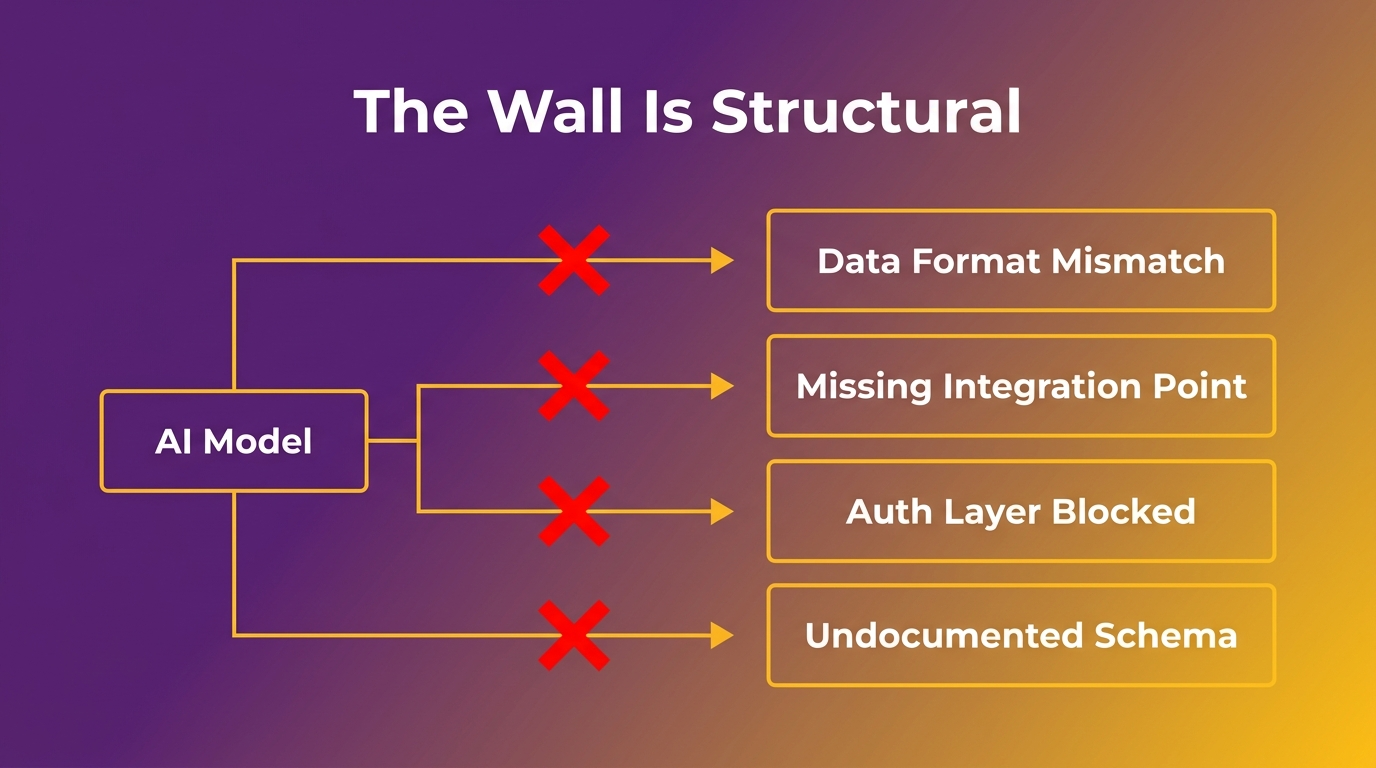

The pattern is consistent. A team identifies a high-value AI use case — automated document processing, intelligent workflow routing, predictive maintenance alerts. They scope a proof of concept, run it in isolation, and it works. Then they try to connect it to the actual operating system, and everything stops. The data is in the wrong format. The integration point does not exist. The authentication layer blocks the API call. The database schema has not been documented since the original developer left. The “quick fix” to get around it takes three months.

“When legacy systems limit access to reliable data, slow down integration across workflows, or make change deployment complex and time-consuming, AI initiatives stop being strategic levers and become isolated experiments,” according to Cesar D’Onofrio, CEO and co-founder of Making Sense. “Organizations may be able to run pilots, but they cannot operationalize or scale them.”

That is the wall. And the wall is structural.

The Legacy Tax: What That System Is Actually Costing You Right Now

Before you can solve the AI readiness problem, you need to see the full cost of what you are already paying. The legacy tax is not a line item — it is the cumulative drag across maintenance spend, lost velocity, and foreclosed opportunity.

The maintenance budget that crowds out innovation spend

Most mid-market organizations spend 60–80% of their technology budget keeping existing systems running. That figure is not a generalization — it is the operating reality for companies running systems built five, ten, or fifteen years ago that have accumulated patches, workarounds, and undocumented dependencies at every layer.

According to McKinsey’s analysis of 500 engineering teams (2025), teams carrying high technical debt took 40% longer to ship features compared to low-debt teams. That is not a technical statistic. That is a competitive one — it means every capability your business needs takes 40% longer to reach your customers than it should.
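To make the 40% figure concrete, the short Python sketch below converts "40% longer per feature" into features shipped per year. The 10-week baseline and the function name are illustrative assumptions, not numbers from the McKinsey analysis, and the model assumes a team ships features strictly one after another.

```python
def features_per_year(weeks_per_feature: float, weeks_per_year: float = 52.0) -> float:
    """Features one team ships per year, assuming strictly sequential work."""
    return weeks_per_year / weeks_per_feature


# Hypothetical baseline: a low-debt team ships one feature every 10 weeks.
low_debt_weeks = 10.0
# The cited figure: high-debt teams take 40% longer per feature.
high_debt_weeks = low_debt_weeks * 1.40

low = features_per_year(low_debt_weeks)
high = features_per_year(high_debt_weeks)
throughput_loss = 1 - high / low

print(f"Low-debt team:  {low:.1f} features/year")
print(f"High-debt team: {high:.1f} features/year")
print(f"Throughput lost to debt: {throughput_loss:.0%}")
```

Under these assumptions, taking 40% longer per feature means shipping roughly 29% fewer features in the same period, whatever baseline you start from.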

The maintenance budget is also a ceiling. When 60–80 cents of every technology dollar goes to keeping existing systems alive, you have almost nothing left for the capabilities that would change your competitive position. You approve the AI initiative and then watch it consume the same budget that was supposed to fund growth.

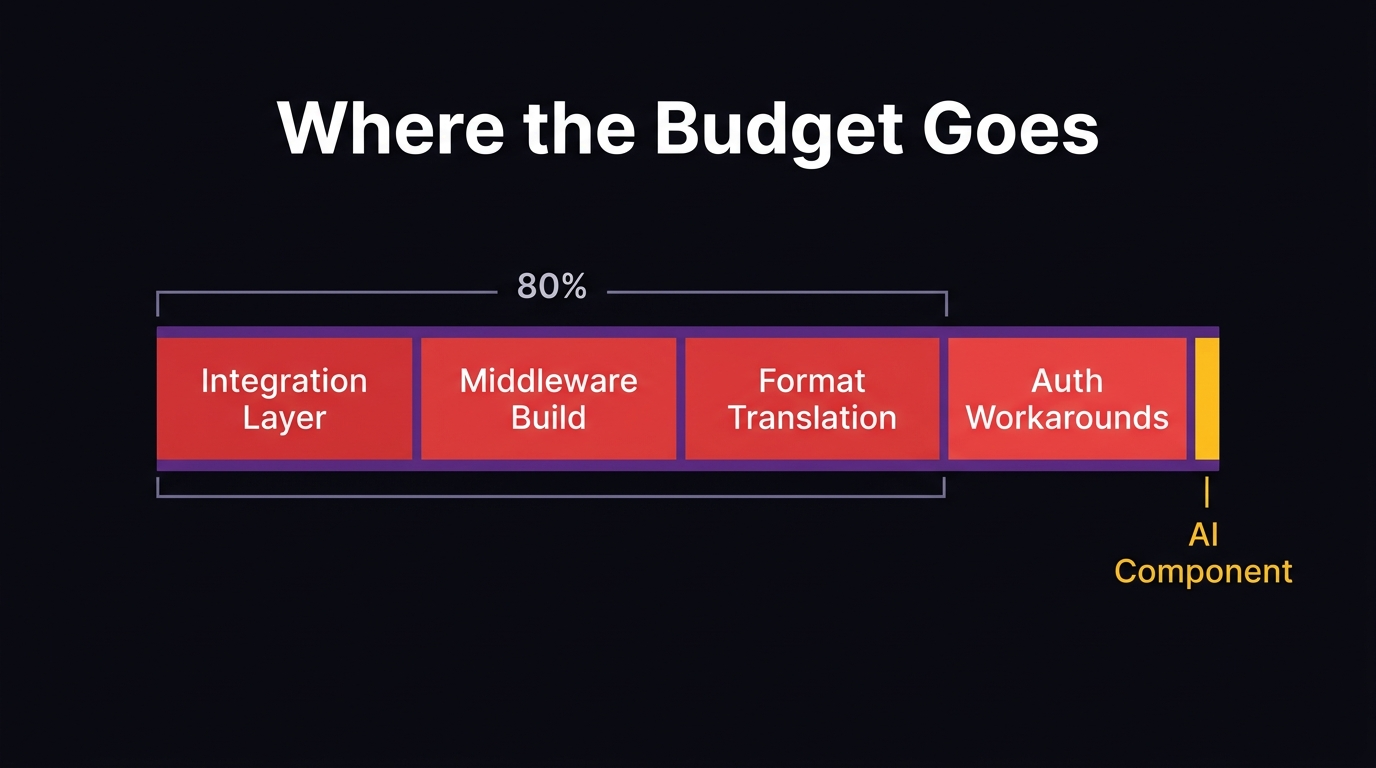

“We see the ROI floor drop out when organizations spend 80% of their budget on bespoke middleware just to get fragmented systems to talk to each other,” said Cesar D’Onofrio of Making Sense. “At that point, you aren’t investing in intelligence. You are paying a legacy tax to keep the lights on.”

The compound cost: technical debt + lost AI opportunity

According to Making Sense (2026), citing ITpro research, enterprises lose approximately $370 million annually due to outdated technology and technical debt. That number is striking in isolation, but it understates the real cost for mid-market organizations because it does not include the opportunity cost of every AI initiative that stalls, scales back, or gets canceled entirely.

Technical debt and AI opportunity cost compound each other. The more debt you carry, the harder AI integration becomes. The harder AI integration becomes, the longer competitors who have already modernized extend their lead. Every quarter you delay is not a neutral pause — it is compounding disadvantage.

Why Every AI Pilot Hits the Same Wall

AI pilots consistently fail to scale because they hit two specific infrastructure barriers: data that exists but cannot be accessed, and integration costs that consume the project budget before the AI component can function.

Data you own but cannot use

Legacy systems were built to store and process data inside a single system, not to share it. The data architecture that made sense in 2010 — when your systems did not need to communicate with anything outside themselves — is the same architecture that blocks every AI model in 2026.

AI models need clean, accessible, consistently structured data. What legacy systems typically provide is the opposite: data locked in proprietary formats, split across siloed databases that do not talk to each other, missing the metadata that would make it useful, and governed by access layers that predate modern API standards.

According to IT Brief (2026), 44% of organizations invest in custom software primarily to improve integration, while 40% name integration as their biggest challenge. Those two numbers describe the same problem from opposite directions: everyone knows the data needs to connect, and almost no one has solved it yet.

As Jesper van den Bogaard, CEO of Factor Blue, describes it: “Data silos are not simply a technical problem; they are also an organizational one. Organizations aren’t aware of the huge impact data silos can have within their organization, so they do not invest enough time and resources in tackling or preventing this issue.”

The integration layer that consumes your AI budget before launch

The Futurum Group’s global survey found that 35% of organizations cited legacy system integration as a barrier to AI adoption, making it the most frequently cited barrier of all: above cost, above skills gaps, above regulatory concerns.

The mechanism is straightforward. Before an AI model can process a single transaction, your team has to build the integration layer that connects it to your existing data. In a modern stack, this is a standard API call. In a legacy environment, it is often months of custom middleware development, format translation, authentication workarounds, and testing — all of it burning budget that was earmarked for the actual AI initiative.
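As a rough illustration of what "format translation" involves, the Python sketch below converts one fixed-width legacy record into structured JSON that a modern service or AI pipeline could consume. Everything in it is hypothetical: the field layout, the status codes, and the sample record are assumptions made for the example, not details of any particular system.

```python
import json

# Hypothetical fixed-width layout: (field name, start offset, end offset).
LAYOUT = [
    ("customer_id", 0, 8),
    ("order_date", 8, 16),     # stored as YYYYMMDD
    ("amount_cents", 16, 26),  # zero-padded integer
    ("status", 26, 27),        # single-letter code
]

# Assumed meaning of the single-letter status codes.
STATUS_CODES = {"O": "open", "C": "closed", "X": "cancelled"}


def translate_record(line: str) -> dict:
    """Turn one fixed-width legacy record into a structured dict."""
    raw = {name: line[start:end].strip() for name, start, end in LAYOUT}
    date = raw["order_date"]
    return {
        "customer_id": raw["customer_id"],
        "order_date": f"{date[0:4]}-{date[4:6]}-{date[6:8]}",
        "amount": int(raw["amount_cents"]) / 100,
        "status": STATUS_CODES.get(raw["status"], "unknown"),
    }


# Sample record assembled from hypothetical values.
legacy_line = "C0001234" + "20260115" + "0000129900" + "O"
record = translate_record(legacy_line)
print(json.dumps(record))
```

Even this toy version depends on knowing the field offsets and code tables. In an undocumented legacy system, recovering that knowledge is often where the months go.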

By the time the integration is functional, the project has consumed most of its runway. The AI component gets scoped down or shelved. The team reports that the “pilot worked” — because the technical proof of concept did work — but it never makes it into production. The next budget cycle, the same conversation starts again.

The Pilot-to-Production Gap: Where Mid-Market AI Actually Dies

The pilot-to-production gap is the specific failure mode that most modernization content ignores. It is not a resourcing problem, and it is not a skills problem. It is a structural consequence of trying to operationalize AI on infrastructure that was not designed for it.

According to S&P Global Market Intelligence, 46% of AI projects are abandoned between proof of concept and broad adoption, and the share of companies abandoning most of their AI initiatives surged from 17% to 42% in a single year. That trajectory does not describe organizations losing interest in AI. It describes organizations repeatedly running into the same infrastructure ceiling and running out of runway before they can clear it.

The pilot works because it runs in isolation. A sandbox environment, a subset of clean data, a controlled integration point. None of those conditions exists in production. When the project moves from the sandbox to the actual operational environment, the gap between “this worked in the demo” and “this works in your systems” becomes the gap between a successful pilot and a canceled project.

According to CBIZ’s Q1 2026 Mid-Market Pulse Report of more than 1,300 business leaders, 84% of mid-market businesses are prioritizing cost optimization and productivity, while 41% report concerns about technology and AI modernization. Those 41% have not failed at AI strategy. They have collided with legacy infrastructure and are trying to figure out what to do next.

The pilot-to-production gap is structural. You cannot sprint, resource, or budget your way past it. You can only fix the foundation it runs on.

Why Layering AI on Top Makes the Problem Worse

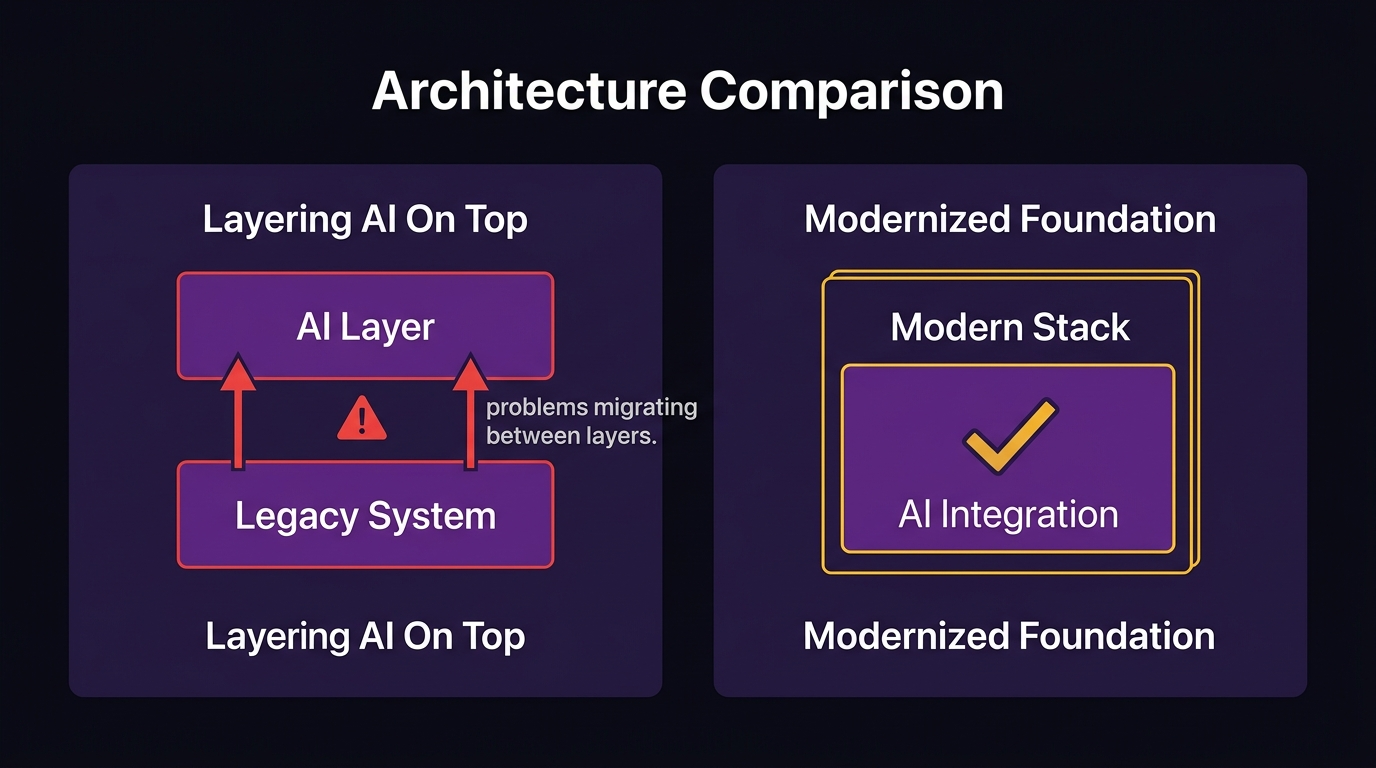

After a failed pilot, the intuitive response is to find a different way in. Add a layer on top of the existing system. Buy a point solution that handles the AI component without touching the legacy stack. Use a wrapper API that abstracts the integration problem away.

This approach is understandable. It is also the reason most mid-market organizations end up with two broken systems instead of one.

When you add a layer on top of a legacy foundation, the legacy foundation’s problems do not disappear — they migrate upward. The data quality issues that blocked your first pilot now block the AI layer you added to get around the first pilot. The integration bottlenecks that consumed your original project budget now also apply to the new layer you built on top. You have doubled the surface area of the problem while solving none of its root causes.

There is also a compounding ownership problem. Every layer you add without modernizing the foundation increases the complexity of the total system. More complexity means more dependencies. More dependencies mean more key-person risk, more integration costs, more maintenance overhead, and more barriers to the next capability you want to add.

“Legacy systems have become so complex that companies are increasingly turning to third-party vendors and consultants for help,” said Ashwin Ballal, CIO of Freshworks. “But the problem is that, more often than not, organizations are trading one subpar legacy system for another. Adding vendors and consultants often compounds the problem, bringing in new layers of complexity rather than resolving the old ones.”

The workaround is not a path forward. It is a longer route to the same wall.

AI-Augmented Modernization: The Path Through the Wall, Not Around It

The path through the wall is modernizing the foundation the AI will run on — and using AI itself to do it faster and at lower cost than traditional modernization approaches have required.

AI-augmented modernization does not mean adding AI features to your legacy system. It means using AI across every phase of the software development lifecycle to rebuild the foundation: requirements analysis, architecture design, implementation, testing, and documentation. AI handles the repetitive, time-consuming work at each phase so the engineering team can move faster and produce cleaner results than traditional development timelines allow.

Using AI across the entire SDLC to modernize the foundation

According to McKinsey, generative AI can deliver 40–50% acceleration in tech modernization timelines and a 40% reduction in costs from technical debt. Those numbers change the calculus on modernization ROI significantly. A project that previously required 24 months can reach delivery in 12–14. A budget that previously required board-level approval becomes a manageable capital allocation.
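The timeline arithmetic can be checked with a few lines of Python. This sketch assumes that "acceleration" means a proportional reduction in elapsed time, which is one reading of the McKinsey figure rather than something the source spells out. The function name is illustrative.

```python
def accelerated_months(baseline_months: float, acceleration: float) -> float:
    """Timeline after a fractional acceleration (0.40 means 40% less elapsed time)."""
    return baseline_months * (1 - acceleration)


baseline = 24  # months, the example used in the text
for acceleration in (0.40, 0.50):
    months = accelerated_months(baseline, acceleration)
    print(f"{acceleration:.0%} acceleration: {months:.1f} months")
```

On that reading, a 24-month project lands between 12.0 and 14.4 months, in line with the 12–14 month range quoted above.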

According to McKinsey, cited by Ciklum (2026), AI can improve developer productivity by up to 45%. When that productivity gain applies specifically to modernization work — migrating legacy data structures, rewriting undocumented business logic, building integration layers, generating test coverage — the compound effect on timeline and cost is substantial.

The specific mechanism: AI-assisted requirements analysis surfaces design risks earlier. AI-accelerated sprint planning reduces planning overhead. AI-generated test coverage means production-ready code reaches deployment with far fewer defect cycles. AI-produced documentation means the knowledge embedded in every engineering decision does not disappear when the engagement ends.

What you get at the end that you didn’t have before

The deliverable is not “a modernized system.” The deliverable is a system that can accept AI integration — with clean data architecture, documented APIs, modern authentication standards, and the integration layer already in place.

When the modernization is complete, the AI pilots you ran before will work. Not because the AI is different, but because the foundation it needs now exists. The data is accessible. The integration points are documented. The architecture supports the connections your AI tools require.

That is the distinction between AI readiness as an aspiration and AI readiness as an infrastructure state. One is a strategy. The other is a system.

Complete Ownership: Why Documentation Transfer Is the Difference Between Modernization and a New Black Box

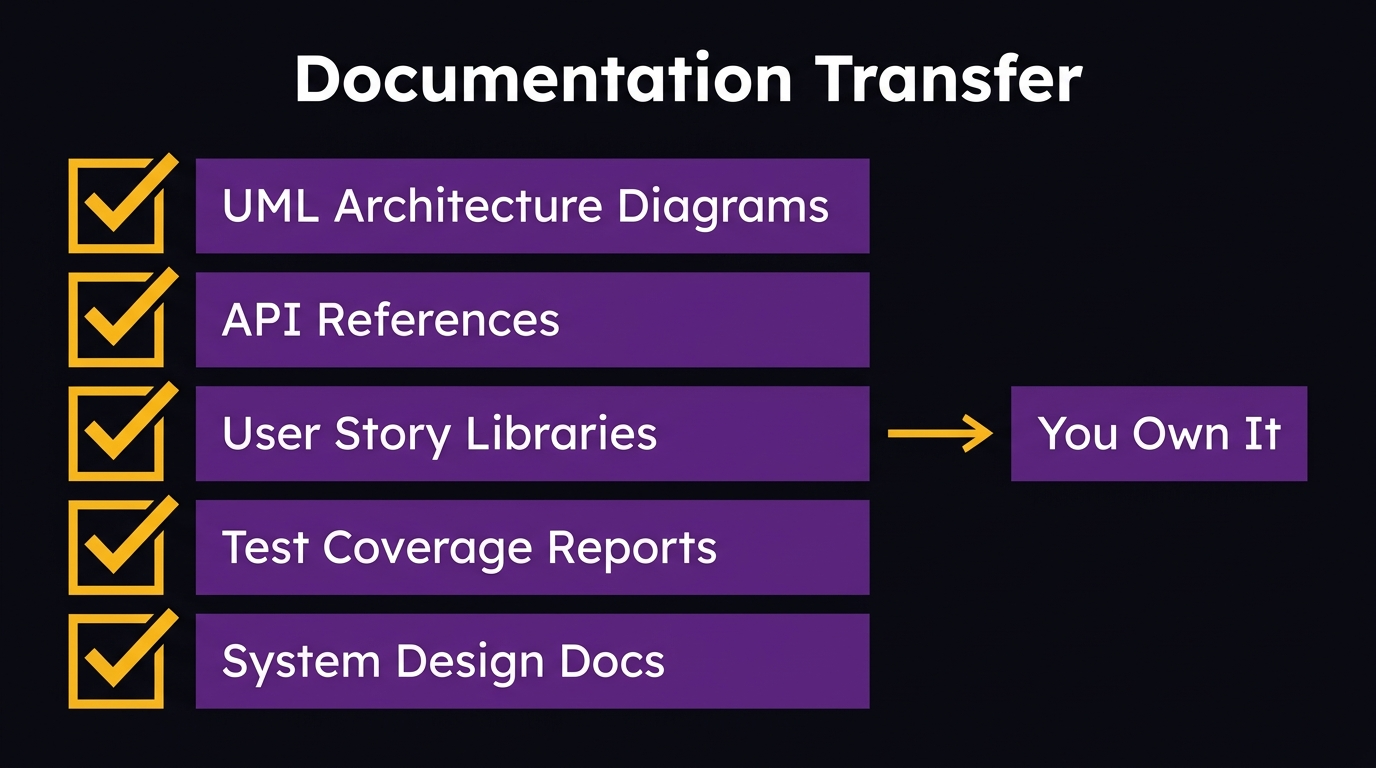

Every mid-market CEO who has been through a major system implementation knows the feeling: you paid for a new system, but you don’t actually own it. The vendor holds the source code logic. The integration documentation lives in their heads. You need their team to change anything. You traded one black box for another.

This is the risk that most modernization conversations never surface — and it is the risk that turns a good modernization project into a new dependency problem. You fix the legacy stack, but you end up equally locked into the firm that did the fixing.

The antidote is documentation transfer — not as a courtesy at project close, but as a contractual standard deliverable on every engagement. UML architecture diagrams. System design documents. API references. User story libraries. Test coverage reports. Every decision the engineering team made, documented and transferred unconditionally to you at the end of the engagement.

Documentation transfer means you can hand the system to your internal team. It means a new vendor can pick it up without starting from scratch. It means the organizational knowledge is in documents, not in someone’s head. It means when the engagement ends, you own the system — actually own it, in the same way you own any other business asset.

“Want control? Own the repo, app store, and cloud. Day 1. If they say ‘we’ll transfer at the end’, run,” warned one founder advising others on outsourcing risks in a widely cited Reddit thread on software ownership.

When evaluating any modernization partner, documentation transfer is not a negotiating point — it is a minimum standard. If it is not unconditional and complete, you are not modernizing your system. You are refinancing your dependency.

What to Ask Before You Hire a Modernization Partner

Most modernization vendor conversations are structured around what the vendor can build. The more important question is what you will own when they are done. These questions give you a CEO-level filter before you go deeper into technical evaluation.

On AI-augmented delivery:

– Does your team use AI across the entire development lifecycle, or only in isolated phases? Ask for specifics — requirements, sprint planning, implementation, testing, and documentation are each distinct.

– How does AI-augmented delivery reduce timeline and cost compared to traditional approaches? Ask for examples from comparable mid-market engagements.

On the foundation you will inherit:

– When the engagement ends, will my stack be able to accept AI integration without additional middleware? What specifically makes it AI-ready?

– What does the data architecture look like after modernization? Can you show me how integration points are documented?

On ownership and documentation:

– What documentation do you transfer at project close? Is it unconditional — meaning it transfers regardless of whether we continue the engagement?

– If I need to hand this system to a new vendor in three years, what would they receive from you to get up to speed?

On dependency risk:

– After delivery, can my internal team or another vendor maintain and evolve this system without your involvement if we choose?

– What would a clean handover look like, and have you executed one before?

On accountability:

– Do you offer SLA-based ongoing support after delivery, and does that support cover systems you built as well as systems built by other vendors?

– Can I speak with a client who is three or more years into their engagement with you?

The answers to these questions tell you whether you are buying a modernized system or buying a new dependency dressed in modern clothing.

Frequently Asked Questions

Why do mid-market AI pilots fail to scale beyond proof of concept?

Mid-market AI pilots fail to scale because the proof of concept runs in a controlled environment with clean data and isolated integration points. When the project moves to production, it collides with legacy data silos, undocumented APIs, and integration layers that do not exist. According to S&P Global Market Intelligence, 46% of AI projects are abandoned between pilot and production. The cause is structural, not a resourcing or skills gap.

How much does legacy system modernization cost for a mid-market company?

Modernization costs vary by system complexity, age, and scope, but AI-augmented approaches have meaningfully changed the range. According to McKinsey, generative AI delivers 40–50% acceleration in modernization timelines and 40% reduction in costs from technical debt. A project that previously required $500K–$2M and 18–24 months can now be scoped significantly lower. A software architecture assessment is the right first step to get an accurate estimate for your specific system.

How long does legacy system modernization take?

Traditional modernization projects run 12–36 months for mid-market systems. AI-augmented modernization compresses that range substantially. McKinsey’s research indicates 40–50% timeline acceleration through generative AI applied across the SDLC. The actual timeline depends on system complexity, integration requirements, and whether the modernization is phased or comprehensive. A phased approach — starting with the highest-priority integration bottlenecks — can deliver AI-ready

What is the fastest path to AI readiness for mid-market organizations?

The fastest path is not another pilot — it is a targeted modernization of the specific infrastructure blocking your highest-value AI use case. Identify the integration bottleneck that killed your last pilot, scope the minimum foundation work required to remove it, and execute that modernization with AI-augmented tooling to compress the timeline. This is faster than a full platform replacement and produces a working AI-ready system, not a proof of concept.

How can companies modernize legacy systems without replacing everything?

Phased modernization addresses the highest-impact areas first — typically data architecture, integration layers, and API documentation — without requiring a full platform replacement. The goal is to make the existing system AI-compatible, not to rebuild it from scratch. This approach avoids the 24–36 month timeline of a full rewrite and the operational risk of migrating live systems all at once. AI-augmented development compresses each phase further.

What is the ROI of legacy modernization for mid-market firms?

The ROI calculation has two components. The direct cost of inaction: according to Making Sense (2026), citing ITpro research, enterprises lose approximately $370 million annually due to technical debt and outdated technology. The cost of delay compounds because AI-enabled competitors extend their advantage each quarter you wait. The positive ROI case includes the feature velocity recovered by paying down technical debt (high-debt teams take 40% longer to ship, per McKinsey), the AI productivity gains (up to 45% per McKinsey), and the competitive capability that becomes available once the foundation is in place.