by Sarah Mitchell | Jun 9, 2026 | AI & Innovation Hub

Agentic AI Governance: The Ops Team’s Blindspot

Your operations team didn’t ask permission. They rarely do. Someone needed to automate a contract review, so they built a quick agent in Zapier AI or Make. Another person wired up a notification agent to flag overdue invoices. A third connected your CRM to an AI workflow that reroutes support tickets without any human in the loop. None of it went through IT. None of it is documented. And all of it is now load-bearing infrastructure.

This is the agentic AI governance problem, and it’s not a future risk. It’s already running in your business.

The question isn’t whether AI agents will get into your operations. They already have. The real question is whether the systems they’re wired to were built to be transparent and auditable, or built fast and then forgotten.

The Agents Are Already Inside Your Operations

AI agents entered ops teams the same way spreadsheets did in the 1990s: one department at a time, without a formal approval process, because they solved an immediate problem faster than any official pathway could.

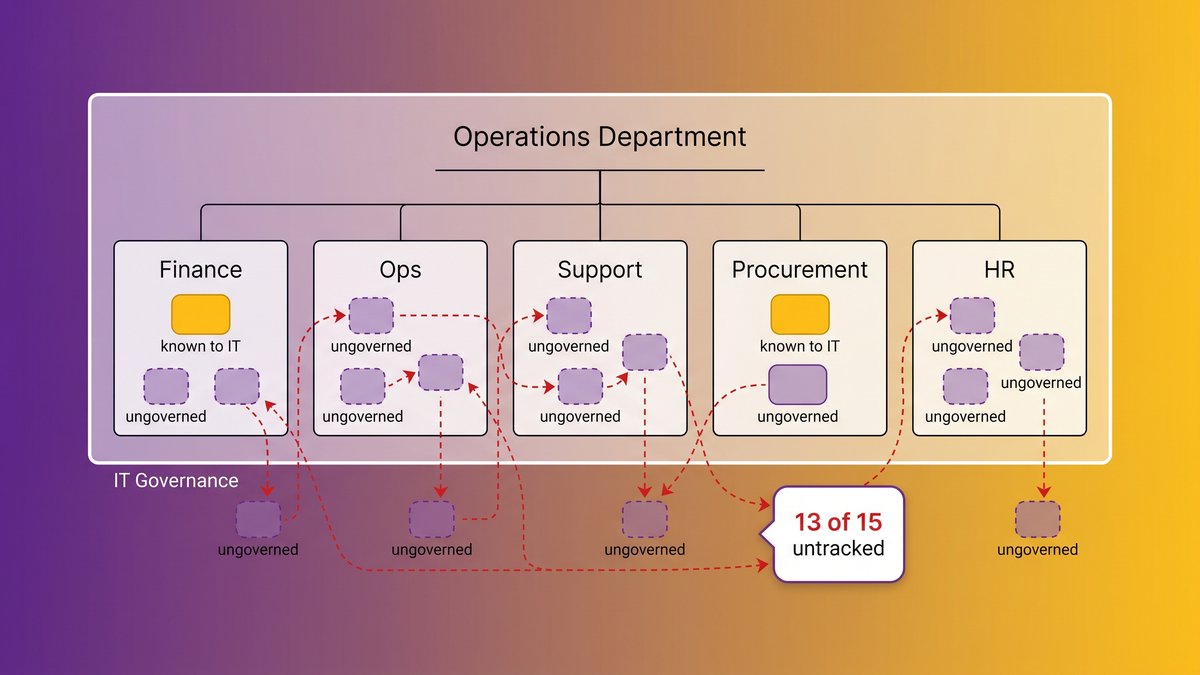

A mid-sized logistics company we worked with had seventeen active AI automations running across their operations function. Their IT team knew about two. The other fifteen had been built by operations coordinators, finance analysts, and one very productive project manager who learned to use an AI workflow builder on a weekend. Some of them touched sensitive vendor contracts. One of them sent automated payment reminders to clients without a human review step.

An operations team with agents running across multiple functions, most invisible to the IT governance layer.

This isn’t a story about rogue employees. It’s a story about how agentic AI tools are designed: low barrier to entry, immediate value, no friction, no documentation requirement. The people building these agents aren’t acting maliciously. They’re solving real problems. But the result is a governance gap that’s compounding by the week.

Shadow AI is what analysts call it when employees use AI tools without IT approval or oversight. CIO.com reports the pattern has now evolved past individual tool usage into what they’re calling “shadow operations”: entire automated workflows running outside any sanctioned governance layer.

The scale is harder to ignore than it used to be. Gartner has published data projecting that by 2028, the average Fortune 500 enterprise will have more than 150,000 AI agents in use, up from fewer than 15 in 2025. The gap between “agents in production” and “agents under governance” is not closing. It’s widening.

Why This Is an Operational Continuity Problem, Not Just a Security Problem

Security teams talk about shadow AI as a data exposure risk. That’s real, but it’s not the frame that keeps COOs up at night.

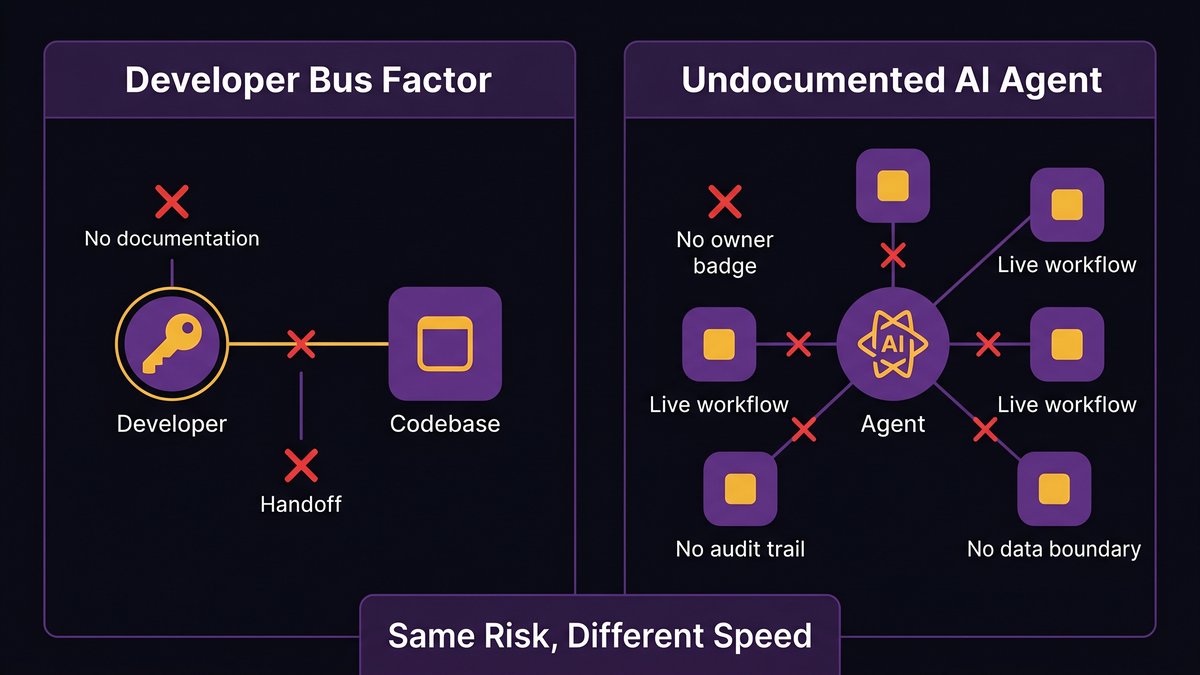

The operational continuity problem is this: when an undocumented agent fails, breaks, or behaves unexpectedly, nobody knows what it does well enough to fix it. And if the person who built it leaves, the organization is in exactly the same position as when a key developer walks out the door holding all the institutional knowledge of a system in their head.

You’ve seen that film before. The developer who built the custom billing system on a Friday afternoon five years ago and documented nothing. The one retirement that triggered a six-month scramble to reverse-engineer a codebase nobody else understood. The consultant who vanished with the architecture in their head.

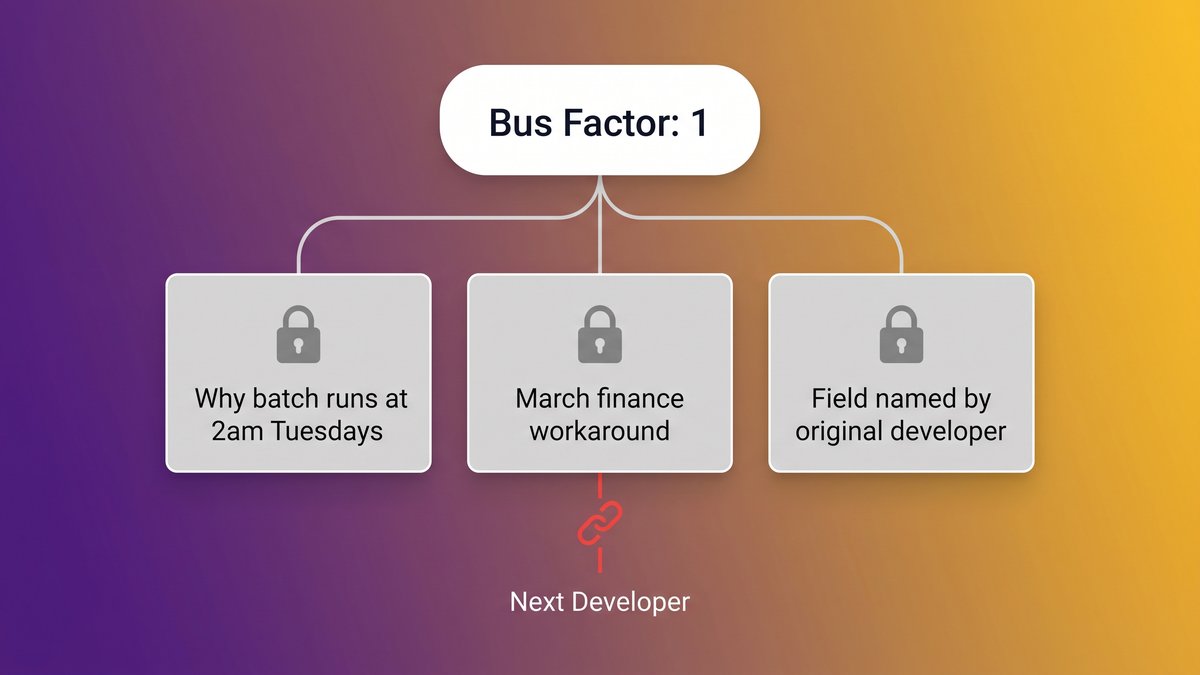

The bus-factor problem isn’t limited to human developers. An agent with no owner and no documentation creates the same single point of failure.

Agentic AI produces the same exposure, but faster and at wider scale. One developer leaving creates a bus factor crisis. A team of five operations staff, each building and maintaining their own agents, creates five of them simultaneously. All invisible to leadership. All quietly critical to the workflows they’ve been threaded through.

Deloitte’s 2026 Tech Trends research shows that 35% of organizations still have no formal agentic AI strategy at all. That figure is not a measure of companies that haven’t adopted AI agents. It’s a measure of companies where agents are running, and nobody is in charge of them.

That’s an operational continuity problem. It’s the same class of risk as deferred infrastructure maintenance: invisible until something fails, catastrophic when it does.

What Ungoverned Agent Sprawl Actually Looks Like in Practice

Agent sprawl is the uncontrolled proliferation of AI agents across an organization without centralized tracking, inventory, or governance. It doesn’t announce itself. It accumulates.

Here’s what it tends to look like at the 18-month mark in a mid-market B2B company:

Duplicate agents doing the same job. Three different people built three different agents to handle variations of the same customer onboarding step. None of them knows the others exist. Two of them send emails to the same clients, sometimes on the same day.

Agents running on tools the company no longer officially supports. The workflow was built on a platform that got acquired, repriced, or deprecated. The agent still runs because nobody noticed, until the API breaks.

No ownership when something goes wrong. A payment reminder agent sends the wrong amount to a client. The operations team opens a ticket. IT says they didn’t build it. The person who built it left six months ago. The agent runs on a personal API key that’s now orphaned. Nobody can stop it without also breaking three other processes that depended on the same key.
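Once even a rough inventory exists, all three symptoms can be checked mechanically. Here is a minimal sketch, assuming a hypothetical inventory in which each agent record carries a purpose, an owner, and the credential it runs on. The field names and sample data are illustrative and do not come from any real platform.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Agent:
    name: str
    purpose: str            # normalized description of the job the agent does
    owner: Optional[str]    # None when the builder has left or is unknown
    credential: str         # identifier of the API key the agent runs on


def group_by(agents, key):
    """Group agent names by an attribute, keeping only groups with 2+ members."""
    groups = defaultdict(list)
    for agent in agents:
        groups[key(agent)].append(agent.name)
    return {k: v for k, v in groups.items() if len(v) > 1}


# Hypothetical inventory, for illustration only.
inventory = [
    Agent("onboarding-email-a", "customer onboarding email", "dana", "key-ops-1"),
    Agent("onboarding-email-b", "customer onboarding email", "lee", "key-ops-2"),
    Agent("payment-reminder", "invoice reminder", None, "key-personal-7"),
    Agent("ticket-router", "support ticket routing", "sam", "key-personal-7"),
]

duplicates = group_by(inventory, lambda a: a.purpose)             # same job, built twice
shared_credentials = group_by(inventory, lambda a: a.credential)  # one key, many agents
orphaned = [a.name for a in inventory if a.owner is None]         # nobody accountable
```

In this sample, revoking `key-personal-7` to stop the orphaned reminder agent would also stop the ticket router, which is the trap described above.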

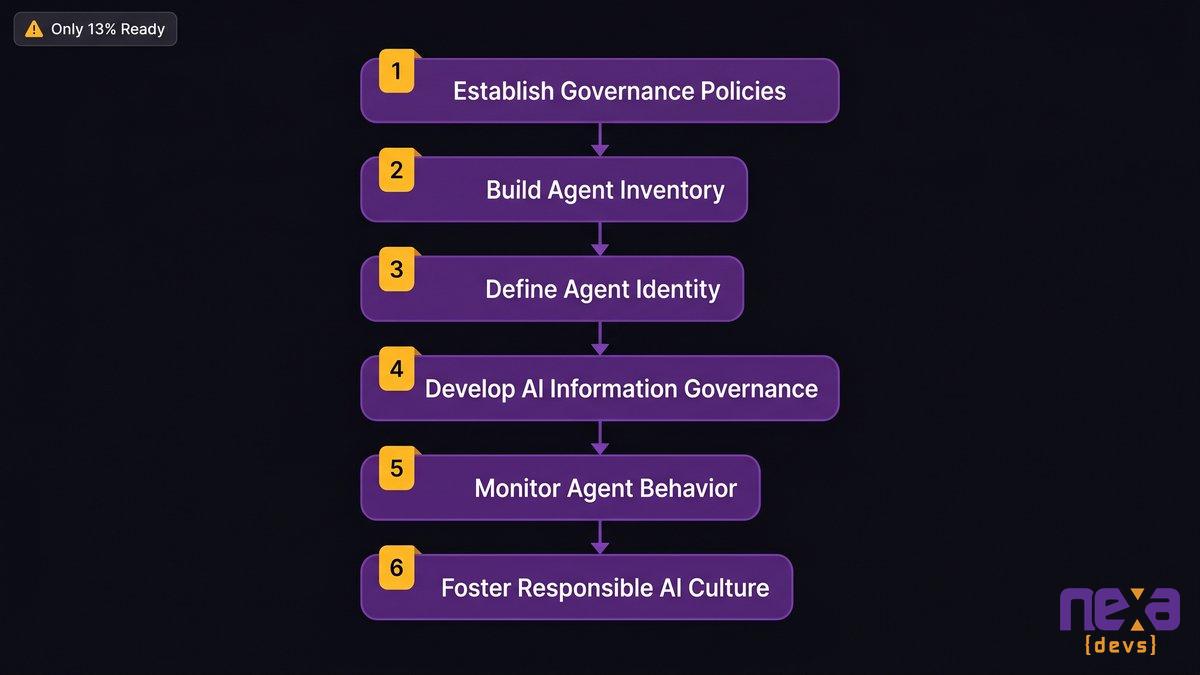

Gartner’s new data is blunt about this: only 13% of organizations believe they have the right AI agent governance in place. That number, published in an April 28, 2026 press release identifying six steps to manage AI agent sprawl, reflects what most operations leaders already feel when they try to answer basic questions like “how many agents are we running right now?”

Gartner’s six-step framework for managing AI agent sprawl was released on April 28, 2026.

The governance problem compounds with scale. A single undocumented agent is a nuisance. Fifty undocumented agents, spread across five departments, each touching different data sources and triggering different downstream actions, is a liability.

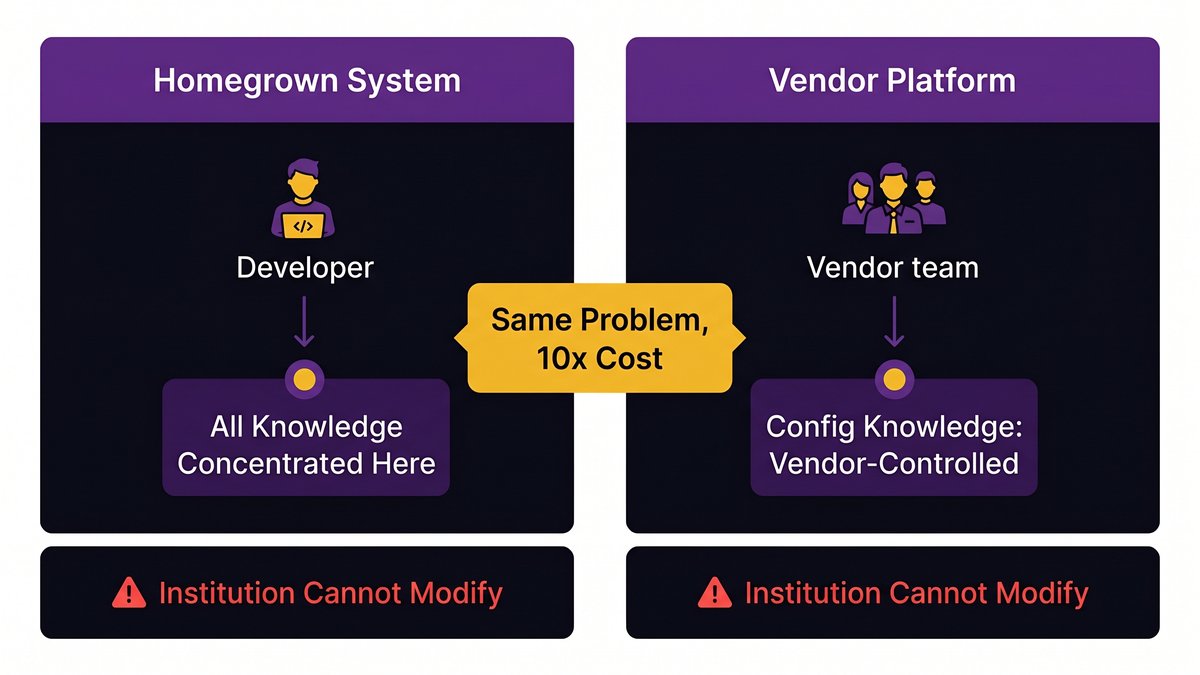

Why Existing Governance Frameworks Weren’t Designed for Operations-Led AI

Most organizations already have an AI governance policy. IT or Legal wrote it. It covers the approved procurement of tools and data handling. And it has zero operational teeth when the agents in question were never procured through any formal process.

IT-centric governance frameworks work well for controlling what the technology function purchases and deploys. They don’t work for operations-led AI because the building happens entirely outside IT. No procurement request, no vendor review, no security assessment. Someone opens a free-tier account on a no-code automation platform, connects their work email, and starts building.

The gap isn’t in the policy language. It’s in the actor. IT governance assumes IT builds the systems. When operations staff build agents directly, which is increasingly the default and not the exception, IT governance can’t see the activity until it’s already embedded in live workflows.

Okta’s research on agentic AI governance makes this structural problem explicit: existing governance frameworks fall short because they weren’t designed to account for “exponential complexity and attack surfaces” created by agents that act autonomously across multiple integrated systems. The accountability and attribution challenges become severe when you can’t answer who owns the agent, who approved its access, or what data it’s touched.

This isn’t an argument for stripping operations teams of their autonomy. They built these agents because they work. It’s an argument for recognizing that the governance model that made sense for software procurement doesn’t map cleanly to a world where your finance analyst can wire up an autonomous agent before lunch without writing a single line of code.

What a Governable Agent System Actually Requires

Governing agentic AI in an operations environment requires three things. They’re not complicated. They are consistently missing.

1. Agent identity: Every agent has a named owner and a defined scope.

Every agent needs a responsible person: not a team, not a department, but a specific individual who is accountable for what it does. That person knows what data the agent accesses, what it triggers, what systems it connects to, and what happens if it fails. The agent’s scope is documented in terms a non-technical stakeholder can read and verify.

Without this, “Who owns that agent?” has no answer. And when something goes wrong at 11 pm on a Friday, the absence of an answer is the crisis.

2. Audit trail: Every decision the agent makes is logged and retrievable.

When your agentic workflow system makes a decision, that decision needs a record. Routes a ticket, sends a payment, approves a discount: all of it logged. Who triggered it, what data it processed, what action it took, and when. Not just for security reasons: for operational accountability. If a client claims they were billed incorrectly and an automated agent handled the billing run, you need to be able to reconstruct exactly what happened.

3. Defined data boundaries: what the agent can touch, and what it can’t.

The agent that handles invoice reminders doesn’t need access to HR records. The agent that routes support tickets doesn’t need access to financial forecasts. Least-privilege access isn’t just a security principle. It’s an operational one. Agents with unnecessarily broad permissions create exposure that grows invisibly as the agent evolves.

The three requirements for a governable agent system are identity, auditability, and defined access scope.
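To make the three requirements concrete, here is a minimal sketch of how they might fit together in one record. Everything in it is an assumption for illustration: the `GovernedAgent` class, its field names, and the in-memory list standing in for what would need to be a durable, append-only log in a real system.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class GovernedAgent:
    name: str
    owner: str                   # identity: one named individual, not a team
    scope: str                   # identity: plain-language description of the job
    allowed_sources: frozenset   # boundaries: the only data the agent may touch
    audit_log: list = field(default_factory=list)  # stand-in for durable storage

    def act(self, action: str, source: str, triggered_by: str) -> bool:
        """Check the data boundary, then log the attempt whether or not it passed."""
        allowed = source in self.allowed_sources
        self.audit_log.append({
            "when": datetime.now(timezone.utc).isoformat(),
            "agent": self.name,
            "owner": self.owner,
            "triggered_by": triggered_by,
            "source": source,
            "action": action,
            "allowed": allowed,
        })
        return allowed


# Hypothetical agent, for illustration only.
reminders = GovernedAgent(
    name="invoice-reminders",
    owner="dana@example.com",
    scope="Emails clients about overdue invoices",
    allowed_sources=frozenset({"invoices"}),
)
ok = reminders.act("send_reminder", "invoices", "nightly-schedule")
blocked = reminders.act("read_record", "hr_records", "nightly-schedule")
```

The design choice worth noting is that denied attempts are logged too. An agent reaching for data outside its scope is exactly the kind of drift an owner needs to see.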

These three requirements aren’t technically demanding. They’re architecturally demanding. A system built quickly by a non-technical operator on a free-tier workflow platform almost certainly doesn’t have them. A system built by a development team with governance as a design constraint will.

For each agentic use case in an organization’s AI portfolio, tech leaders should identify and assess the corresponding organizational risks, and, if needed, update their risk assessment methodology.

mckinsey.com

The Difference Between Built Fast and Forgotten and Documented Architecture

Most operations-led AI agents share the same birth story: someone with a real problem, a low-code platform, an afternoon to spare, and no time for documentation. The agent works. It gets used. Other workflows start depending on it. The documentation never happens, because a working system always feels less urgent to document than whatever problem is next in the queue.

This is the “built fast and forgotten” pattern. The agent exists. It runs. Nobody except the original builder understands it, and sometimes not even them, six months later.

The alternative isn’t slower. It’s structured.

When a development team builds an internal agentic system with governance as a design constraint, the output looks different. An architecture document exists from day one. The data flow diagram shows what the agent touches and what it doesn’t. API integrations are scoped to what the agent actually needs. A handoff document means whoever inherits the system can understand it without reverse-engineering it from scratch.

This is what Nexa Devs builds when organizations come to us after discovering their operations are running on a layer of undocumented AI automations that nobody fully controls. Not a governance policy. A governable system, one where the operational map exists from day one.

The distinction matters because retrofitting governance onto undocumented agents is significantly harder than building governable agents in the first place. You can’t audit what was never logged. You can’t set access boundaries on integrations that were never scoped. The documentation debt compounds the same way technical debt does: invisibly, until it’s expensive.

Getting the Operational Map You’re Currently Missing

If your organization is in the majority (Deloitte found that only 11% of organizations are actively using agentic AI systems in production with any formal strategy), the starting point is an inventory.

Conducting a shadow agent audit:

Start with the question: what automated workflows are running right now that IT didn’t build? Ask operations managers, not IT. The IT team knows what they own. Operations teams know what they built.

A practical audit runs through three inventories: platforms (which no-code and AI automation tools are connected to company data?), integrations (which company systems have active API connections to third-party tools?), and outputs (which automated emails, notifications, or data writes are firing without a human trigger?).

That audit will surface agents that nobody in the governance chain knew existed. Some of them will be genuinely load-bearing. Some will be dormant. A few will be actively creating compliance exposure.
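The three inventories can be cross-referenced with very little tooling. The sketch below assumes the audit answers have already been collected by hand into simple mappings. The agent names, system names, and the `known_to_it` set are hypothetical sample data.

```python
# Hypothetical audit inputs. In practice these would come from interviews,
# platform admin consoles, and outbound email or API logs.
platforms = {
    "zapier": ["contract-review", "invoice-flagger"],
    "make": ["ticket-router"],
}
integrations = {
    "crm": ["ticket-router"],
    "billing": ["invoice-flagger", "payment-reminder"],
}
outputs = {
    "email": ["payment-reminder", "invoice-flagger"],
}
known_to_it = {"ticket-router"}


def discovered_agents(*inventories):
    """Map each agent name to the inventory entries that surfaced it."""
    found = {}
    for label, inventory in inventories:
        for location, names in inventory.items():
            for name in names:
                found.setdefault(name, set()).add(f"{label}:{location}")
    return found


found = discovered_agents(
    ("platform", platforms),
    ("integration", integrations),
    ("output", outputs),
)

# Anything the audit surfaced that IT has no record of is a shadow agent.
shadow = {name: sorted(seen) for name, seen in found.items() if name not in known_to_it}
```

Here `payment-reminder` never appears in the platform inventory at all. It only shows up through its billing integration and its outbound email, which is why the audit looks at outputs as well as tools.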

Before any new agent goes into production:

Require three things before an agent goes live: a named owner, a plain-language description of what the agent does and what data it accesses, and a test scenario that documents expected versus actual behavior. This doesn’t require a formal approval board. It requires a one-page record that lives somewhere retrievable.
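That one-page record can be as simple as a structure with a completeness check. Here is a minimal sketch, assuming a hypothetical `GoLiveRecord` whose fields mirror the three requirements above. It checks only that each field is filled in, not that the content is any good.

```python
from dataclasses import dataclass


@dataclass
class GoLiveRecord:
    agent: str
    owner: str              # the named individual accountable for the agent
    description: str        # plain language: what it does, what data it accesses
    expected_behavior: str  # test scenario: what should happen
    actual_behavior: str    # test scenario: what did happen


def missing_fields(record: GoLiveRecord) -> list:
    """Return the names of required fields that are empty or whitespace."""
    return [name for name, value in vars(record).items() if not value.strip()]


def ready_for_production(record: GoLiveRecord) -> bool:
    return not missing_fields(record)


# Hypothetical draft with no owner and no recorded test result.
draft = GoLiveRecord(
    agent="invoice-reminders",
    owner="",
    description="Emails clients with overdue invoices; reads the invoices table",
    expected_behavior="Reminder sent for an invoice 30 days past due",
    actual_behavior="",
)
gaps = missing_fields(draft)
```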

The organizations that will handle the transition to agentic operations cleanly aren’t the ones that blocked agents. They’re the ones that built systems where agents are visible, owned, and auditable. That starts with knowing what’s already running.

If you’re ready to replace your layer of undocumented automations with a purpose-built, governable internal system, contact Nexa Devs to discuss a shadow agent audit and custom build assessment.

FAQ

What is agentic AI governance?

Agentic AI governance is the structured management of autonomous AI agents that act on behalf of an organization. It defines who owns each agent, what data it can access, what actions it can take, and how its decisions are logged. Without governance, agents multiply and create accountability gaps that are difficult to reverse.

Why is agentic AI governance an operations problem, not just an IT problem?

Operations teams are now building AI agents directly, without IT involvement, using no-code workflow platforms. IT governance frameworks don’t see these agents because they were never procured through official channels. The governance gap lives where agents are built, in operations, and not where IT can easily monitor them.

What is AI agent sprawl?

AI agent sprawl is the uncontrolled proliferation of AI agents across an organization without centralized inventory, ownership, or oversight. Gartner projects Fortune 500 companies will operate over 150,000 agents by 2028, up from fewer than 15 in 2025.

How do you govern AI agents that are already running in production?

Start with an inventory by asking operations managers what automated workflows they’ve built. Then require three things for each agent: a named owner, a description of what data it touches, and a log of its decisions. For undocumented agents, the options are to document retroactively, replace with a governable system, or retire.

What’s the difference between shadow AI and agent sprawl?

Shadow AI is any unsanctioned use of an AI tool. Agent sprawl is more specific: it’s the uncontrolled accumulation of autonomous AI agents wired into live operational workflows. Agent sprawl is shadow AI that has become load-bearing infrastructure.

by Sarah Mitchell | Jun 4, 2026 | AI & Innovation Hub

AI Layoffs and Institutional Knowledge: The Cost Nobody Warned You About

The call comes six weeks after the layoff is final. Your operations director finds you before the Monday standup. Three words land: “Nobody knows how.”

The developer you cut was the only person who understood the custom integration your order management system runs on. Not the only person who wrote it. The only one who knew why a specific database trigger fires at 3 am, why staging behaves differently than production, and what happens if you change the API endpoint it depends on. You didn’t know any of that when you approved the layoff. Neither did HR.

1. AI layoffs don’t just cut headcount. They destroy system knowledge that lives exclusively in the departed developer’s head.

2. Forrester Research found 55% of companies already regret AI-driven layoffs, and predicts half of those cuts will be quietly rehired.

3. When a developer who built your internal system leaves, that system becomes unmaintainable. This is true regardless of whether any code was removed.

4. Bus factor measures how many people must leave before a system breaks. For most mid-market companies, it’s one.

5. The structural fix isn’t documentation software. It’s an embedded team model that builds knowledge continuity into every delivery.

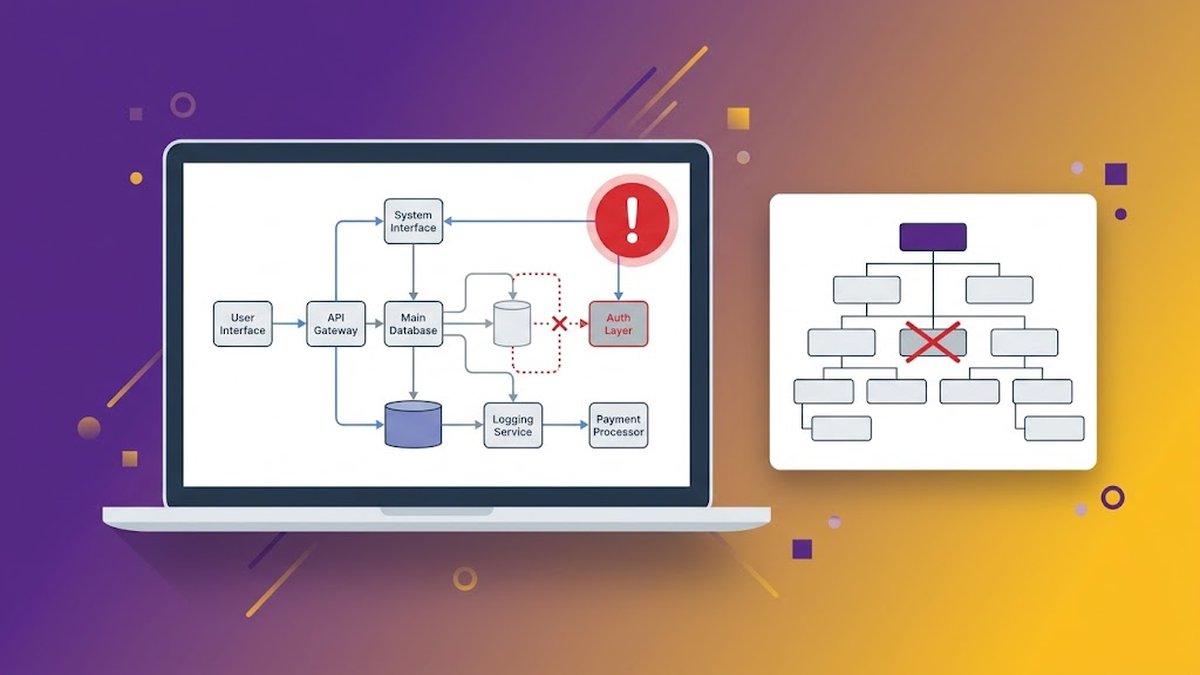

A scene that plays out in mid-market companies after AI-driven layoffs: critical internal systems become unmaintainable when the developer who built them is gone.

You Approved the Layoff. Then the System Broke.

It doesn’t announce itself with sirens. Six months later, someone investigating a report that shows incorrect numbers types into the team Slack: “We can’t find anyone who knows how this works.”

It’s not a dramatic failure. No alarms fire. The system doesn’t collapse in a cloud of error messages. What happens is quieter: a feature stops working, a report shows numbers that look slightly wrong, an integration starts behaving inconsistently. When your team investigates, they find code nobody can read, architecture nobody can explain, and decisions nobody remembers making.

This is the AI layoffs institutional knowledge crisis in mid-market software systems. It doesn’t announce itself. It accumulates.

Mid-market companies (those in the 50-to-500-employee range) have a specific vulnerability that enterprise organizations don’t. At enterprise scale, redundancy exists almost by accident: multiple developers work on the same systems, documentation practices get enforced through process and compliance, and knowledge gets distributed across teams. At mid-market scale, you often had one developer, maybe two, who understood your custom reporting pipeline, your internal CRM integration, your homegrown order management workflow. That’s not a management failure. It’s a resource reality.

When AI-driven workforce reduction hits, the math changes fast. The developer who knew the system goes. The system stays. The knowledge doesn’t.

The call no one prepares for: “We can’t find anyone who knows how this works.”

The specific scenario that blindsides CEOs isn’t the system breaking on day one. It’s the system breaking at month three, after a minor configuration change, after a routine update, after a new hire tries to add a feature. Nobody realizes the knowledge is gone until someone needs it.

One case study published by Lazorpoint, an IT services firm, describes a CEO who grew frustrated with her head of IT. She realized, too late, that he was the only person who knew how everything worked. “IT operations people often had to call on that head of IT directly just to keep the business running.” When he gave notice and refused to assist with the transition, the business faced an operational crisis it hadn’t anticipated.

Why mid-market software systems carry a hidden single point of failure

Your internal custom software was almost certainly built by a small team, with limited documentation, optimized for shipping speed rather than knowledge transfer. When headcount shrinks, the knowledge margin shrinks with it. In many mid-market environments, that margin was already at one person before the layoff list was drafted.

The AI Layoff Math That Doesn’t Add Up

The labor cost savings looked clean on the spreadsheet. Headcount reduced, payroll trimmed, productivity maintained. The actual numbers tell a different story.

Tech shed nearly 80,000 jobs in Q1 2026: half attributed to AI

The tech industry laid off nearly 80,000 employees in Q1 2026, with almost 50% of the affected positions attributed to AI-driven restructuring, according to Tom’s Hardware’s industry-tracking data.

The scale matters for context. This isn’t a handful of companies making cautious cuts. It’s a sector-wide pattern, driven by the same thesis: AI tools can replace certain categories of work, so the humans doing that work can go. The thesis holds until the work turns out to be more complex than the AI can handle. Or until the knowledge embedded in the human’s head proves irreplaceable by the tool.

55% of companies already regret cutting: what Forrester found

Forrester Research’s Predictions 2026 report found 55% of employers already regret their AI-driven layoffs. The report, cited by HR Executive, also predicts that half of AI-attributed layoffs will be quietly rehired, typically at lower salaries or offshore, which introduces its own complications. Lost productivity and knowledge gaps are named as the primary drivers of regret.

A majority of companies that cut are already wishing they hadn’t. Not because AI failed as a concept, but because the humans they cut carried something AI couldn’t carry: context about systems that were never documented.

The rehiring boomerang: why it costs more the second time

Rehiring isn’t a clean undo. A developer who understood your integration layer doesn’t return at the same cost, under the same terms, with the same institutional knowledge intact. If they’re available at all, they’re coming back at a premium. They know their leverage. And the weeks they were absent weren’t idle: configurations changed, other team members made undocumented decisions, the system evolved in ways the returning developer must now relearn.

ClearlyAcquired’s analysis of key-person replacement costs puts the figure at 150 to 400% of annual salary, with new hires needing 16 to 20 weeks to reach full productivity. The cost isn’t just the premium salary. It’s the ramp-up time, the knowledge reconstruction, and the decisions made incorrectly during the gap.
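To see what that range means in dollars, here is a small sketch applying the cited multipliers to a salary. The $120,000 figure is a hypothetical input chosen for illustration and does not come from the cited analysis.

```python
def replacement_cost_range(annual_salary: float) -> tuple:
    """Apply the cited 150% to 400% of annual salary range."""
    return (1.5 * annual_salary, 4.0 * annual_salary)


# Hypothetical salary, for illustration only.
low, high = replacement_cost_range(120_000)
print(f"Replacement cost: ${low:,.0f} to ${high:,.0f}")
```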


The rehiring boomerang: companies that cut developers to save money frequently spend more bringing back equivalent expertise, often at a premium over the original salary.

What “Institutional Knowledge” Actually Means for Internal Software

Most CEOs have heard “institutional knowledge” in an HR context. It’s the phrase used when a long-tenured executive retires and takes 30 years of industry relationships with them. That loss is real. It’s also recoverable.

Software institutional knowledge is different. It doesn’t recover the same way. And the gap is wider than most people expect.

74% of organizations lack a formal method of capturing and retaining technical knowledge, including system knowledge, according to research cited by CAST Software.

The directional reality is consistent with what any mid-market CEO who has asked their IT team for documentation already knows: it doesn’t exist, or it’s out of date, or it covers what the system does but not why it was built the way it was.

The difference between HR institutional knowledge and system knowledge: why software is worse

When a senior sales director leaves, you lose their client relationships, market instincts, and internal influence. All recoverable. A new hire can rebuild client relationships. Overlapping experience approximates market instincts.

When the developer who built your internal CRM integration leaves, you lose accumulated decisions baked into code. Why does that API use a non-standard endpoint? Because the standard one had a rate limit that caused failures in 2023, and the fix was never documented. Why does the nightly sync run at 3 am? Because when it ran at 11 pm, it conflicted with a backup process that no longer exists, but changing the schedule broke something else. Why does staging behave differently from production? A temporary config change was applied in production during a crisis and never properly recorded.

None of that lives in a readme. It lives in a person.

What walks out the door when the developer leaves

What actually walks out: the reasoning behind architectural decisions (not just what the decision was); knowledge of which parts of the system are fragile and what triggers failure; an understanding of which “temporary” workarounds became permanent load-bearing infrastructure; awareness of integrations that don’t appear in any diagram; and the mental model of how all of it connects.

Research from docs.bswen.com on developer knowledge management puts the split at approximately 90% tacit and 10% documented. The 90% is what disappears when the developer’s last day comes.

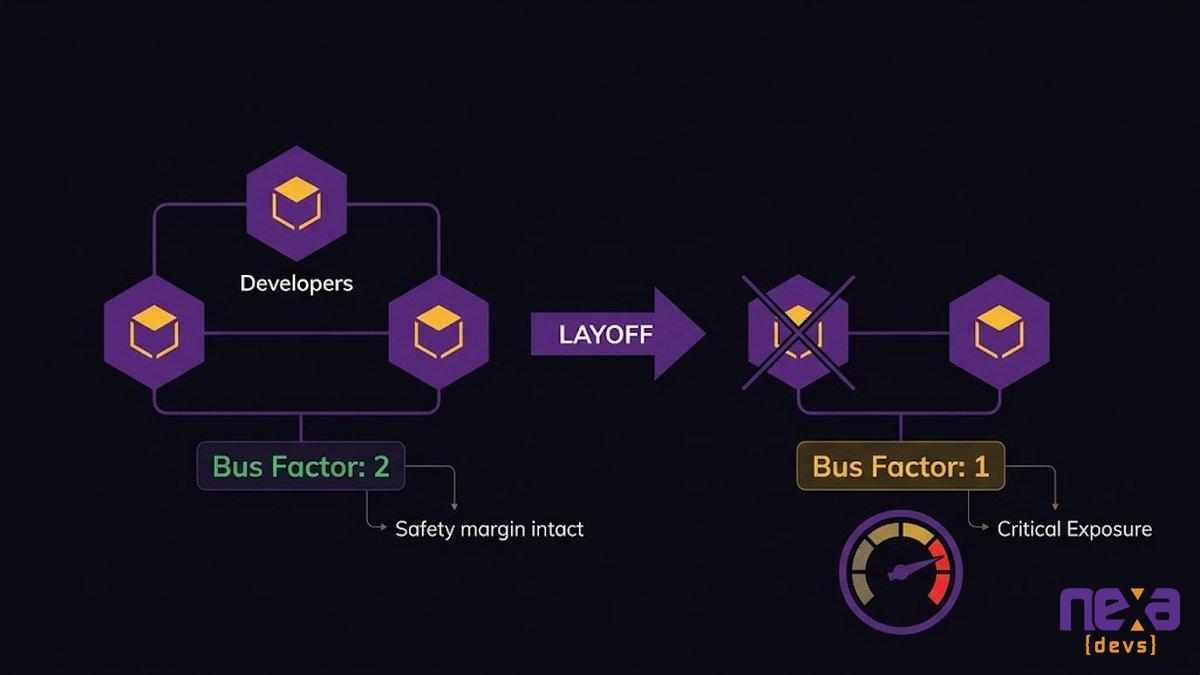

Bus Factor: The Metric Your IT Team Knows and You Don’t

Your engineering team probably knows what bus factor means. It’s the dark-humor metric from software development: how many developers need to get hit by a bus before the project collapses? Morbid framing aside, it’s a genuine risk measure. For most mid-market software systems, the answer is one.

What bus factor means and why a score of 1 is a CEO-level risk

The bus factor quantifies the concentration of knowledge in a software system. A score of 1 means one person holds enough critical knowledge that their departure renders the system unmaintainable. A score of 2 means two must leave before the system becomes inaccessible to everyone remaining.
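A rough way to estimate this for your own systems is sketched below. It assumes a hand-maintained map of who genuinely understands each component, which is a simplification and not how any particular analysis tool computes the metric. The component and people names are hypothetical.

```python
def bus_factor(knowledge: dict) -> int:
    """Smallest number of departures that leaves some component with nobody who understands it."""
    return min(len(people) for people in knowledge.values())


def after_departures(knowledge: dict, departed: set) -> dict:
    """Return the knowledge map with the departed people removed."""
    return {component: people - departed for component, people in knowledge.items()}


# Hypothetical knowledge map, for illustration only.
knowledge = {
    "order-management": {"ana", "raj"},
    "reporting-pipeline": {"ana", "raj", "mei"},
    "crm-integration": {"raj", "mei"},
}

before = bus_factor(knowledge)
after = bus_factor(after_departures(knowledge, {"raj"}))
```

With this sample data the score drops from 2 to 1 after a single departure, because two components each lose one of their two knowledgeable people.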

JetBrains’ Bus Factor Explorer analysis, published by LinuxSecurity.com in March 2026, found that major open-source databases like MySQL and PostgreSQL sit at a bus factor of 2. Already classified as high-risk. Enterprise teams managing internal custom systems typically do worse. For your custom operations tooling, your integration layer, and your homegrown reporting pipeline, the bus factor is often 1.

This is a CEO-level risk because it determines the minimum viable headcount for your critical systems. Below that threshold, you don’t have a staffing problem. You have a continuity problem.

72% of companies have at least one person whose departure would significantly disrupt operations

A 2023 SHRM study found that 72% of companies report having at least one employee whose sudden departure would significantly disrupt operations. In software system terms, that disruption isn’t just organizational. It’s technical. The HR director leaving takes relationships. The developer leaving takes the system’s interpretability.

How AI-era layoffs are systematically reducing bus factor to 1

Before recent AI-driven layoffs, many mid-market teams operated with bus factors of 2 or 3 for their most critical internal systems. Not great, but survivable. When a 5-person team shrinks to 3, and the cut positions include the two developers with the deepest system context, you don’t just lose headcount. You remove the safety margin entirely.

AI tools are genuinely useful for certain development tasks. They aren’t able to explain why a specific trigger condition exists in a legacy codebase that was never documented. The code itself doesn’t contain the reason. The developer who wrote it did.

Bus factor collapses as AI-driven layoffs shrink engineering teams: a marginally safe bus factor of 2 becomes critical exposure at bus factor 1 after one or two cuts.

The True Cost: What Happens in the 90 Days After the Developer Leaves

The financial case isn’t abstract. It plays out on a timeline in two phases that most companies don’t anticipate until they’re already in both.

Within the first 90 days, the losses are operational. A deployment fails because nobody knows the environment-specific configuration that the departed developer managed manually. A data sync stops because an API token wasn’t renewed. Nobody knows which account held it. A report returns wrong numbers because a calculation change applied six months ago wasn’t reflected in any documentation.

Each incident costs time. More significantly, they erode your team’s confidence in the system and your confidence in their ability to manage it. The system that was running fine becomes the system nobody wants to touch.

Delayed losses: the features you can’t add, the compliance you can’t prove

The delayed losses are worse. From 90 days to 18 months out, you start running into the hard ceiling of what a team can do with systems they don’t fully understand.

A potential client asks for a compliance audit. You can’t produce the documentation. A regulatory change requires a modification to your data handling. Nobody knows which components to change without risking cascading failures. A growth initiative requires extending your internal tooling. The estimate comes back at three times the expected figure, because every change requires extensive reverse-engineering before the first line of new code gets written.

These aren’t edge cases. They’re the standard delayed consequences of knowledge loss from developer turnover in custom software environments.

An organization with 30,000 employees can expect to lose $72 million annually in productivity from knowledge loss caused by employee turnover, according to a figure cited by ProcedureFlow, attributed to Panopto’s workplace survey.

Scale that to a mid-market company. The cited figure works out to $2,400 per employee, so the proportional impact for a 200-person organization is roughly $480,000 a year. That’s before accounting for the specific compounding cost of undocumented custom software systems.
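The scaling is simple arithmetic, sketched here under one loud assumption: that the loss is proportional to headcount. The cited figure does not say it scales linearly, so treat the result as an order-of-magnitude estimate.

```python
CITED_LOSS = 72_000_000    # annual productivity loss cited for the large organization
CITED_HEADCOUNT = 30_000   # employees in that organization


def proportional_loss(headcount: int) -> float:
    """Scale the cited loss linearly by headcount (an assumption)."""
    return CITED_LOSS / CITED_HEADCOUNT * headcount


per_employee = CITED_LOSS / CITED_HEADCOUNT
mid_market = proportional_loss(200)
print(f"${per_employee:,.0f} per employee, ${mid_market:,.0f} for 200 people")
```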

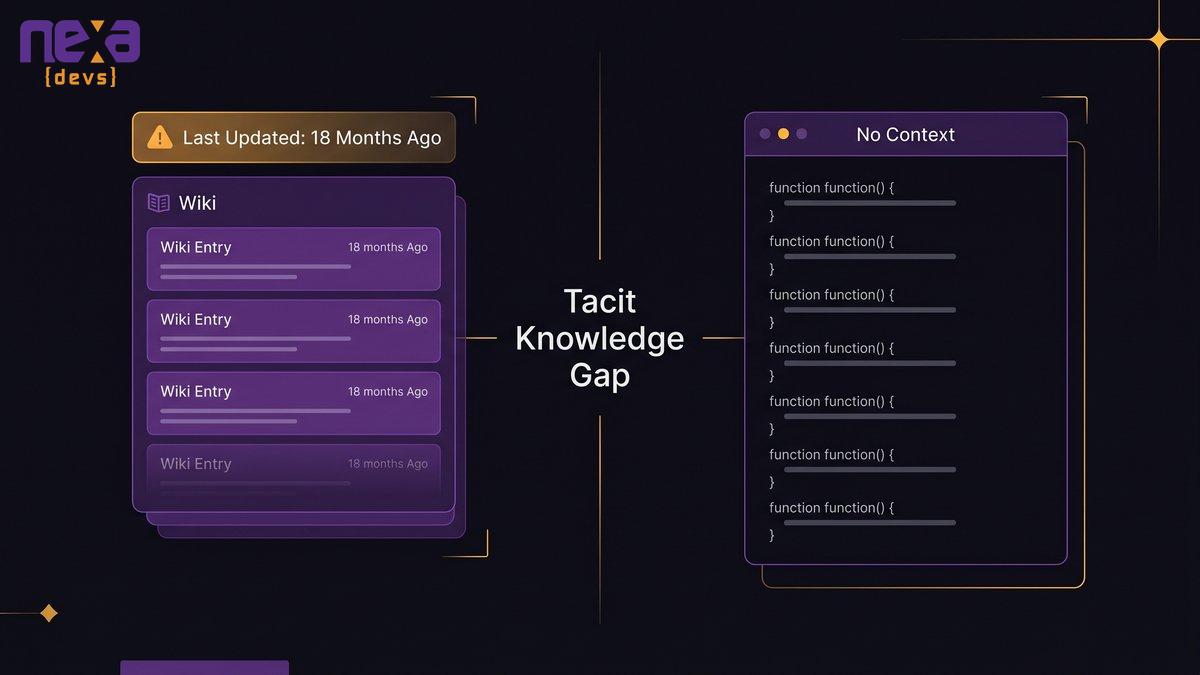

Why the Fix Isn’t Documentation Software

When CEOs confront the AI layoffs institutional knowledge gap, the instinctive response is: “Let’s document everything.” Buy a wiki. Assign someone to write it all down. Mandate a documentation sprint before any developer leaves. This is logical. It doesn’t work.

The “we’ll write it down” trap: why documentation efforts fail without ownership

Documentation efforts fail for a structural reason: nobody owns their ongoing maintenance. A wiki gets written during a project and becomes outdated within 90 days. A runbook covers the process as it existed when it was written, not as it has evolved through six months of incremental patches. Architectural diagrams reflect the initial design, not the production reality after two years of workarounds.

Accurate documentation requires the person who understands the system to maintain it continuously. Writing it once at departure is not the same thing. A developer with two weeks’ notice has no time and no incentive to produce documentation that would take months to write accurately.

What actually transfers knowledge in a software handoff

Genuine knowledge transfer in software requires three things: time, overlap, and accountability. Time means weeks of paired work, not a two-week notice period. Overlap means the incoming developer works alongside the outgoing one on live systems, not just reads documents. Accountability means someone verifies that the knowledge was actually transferred, not just that the documentation was filed.

Most departing-developer handoffs fail all three conditions. The time isn’t there. The overlap can’t happen because the replacement wasn’t hired in advance. And nobody audits whether the knowledge is transferred until the system breaks three months later.

The misplaced faith in AI to understand undocumented systems

In 2026, the response in some organizations is: “We’ll use AI to read the codebase and generate documentation.” AI coding tools are useful for annotating functions, identifying patterns, and producing basic descriptions. They can’t explain why decisions were made, which assumptions the system depends on, or which parts of the codebase are safe to modify.

AI reads the code as written. The institutional knowledge crisis is about what wasn’t written: the context, the history, the reasoning behind choices now baked in as constraints. No tool, AI-assisted or otherwise, reconstructs what was never captured.

Documentation tools create the appearance of coverage. The tacit knowledge that actually runs the system stays undocumented until something breaks and everyone realizes it wasn’t there.

What Mid-Market CEOs Are Doing Instead

Companies navigating AI-era workforce reduction without catastrophic knowledge loss have something in common: they didn’t treat documentation as a post-departure activity. They built knowledge continuity into the way the work is delivered.

The embedded team model: documentation as a deliverable, not an afterthought

An embedded development team maintains ongoing context about the systems it manages. When a developer cycles off, their replacement receives a structured handoff from colleagues who have been working on the same systems in parallel. Knowledge transfers through direct overlap, not documentation written under departure pressure.

This structural difference is decisive. Knowledge doesn’t live in one person’s head because the team has been building and maintaining it collectively. Architecture decision records get written as decisions are made, not reconstructed from memory six months later.

The resulting documentation belongs to the client company. Unconditionally. Not vendor-held records. Not knowledge accessible only through a portal. Complete technical documentation: UML diagrams, API references, architecture decision records, system design documents. Transferred to and owned by the client at project completion, regardless of whether the engagement continues afterward.

As Ashwin Ballal, CIO at Freshworks, states: “When you add vendors, you are not reducing complexity. You are just moving it somewhere else, and often adding new dependencies on top of old ones.” The same principle applies to knowledge: when documentation remains with the vendor rather than being transferred to the client, you’ve traded one knowledge dependency for another.

How nearshore AI-augmented development builds knowledge continuity into the engagement

An AI-augmented development process systematically produces documentation as a byproduct of delivery, not as an end-phase deliverable nobody has time to write. Architecture decision records, API documentation, and system design artifacts exist because the process requires them, not because someone remembered to prioritize documentation at a developer’s departure.

Nearshore teams aligned with U.S. time zones maintain the communication continuity that documentation-as-process requires: real-time collaboration, daily standups, and code reviews that include documentation reviews. These are the practices that keep knowledge accessible and up to date, without relying on any individual developer’s memory.

For mid-market companies already managing knowledge gaps from completed AI-driven layoffs, this model also addresses system rescue: taking over and stabilizing internal software in poor condition, reverse-engineering the current state, and returning the system to a maintainable baseline with full documentation transfer.

Three questions to audit your internal systems’ bus factor before the next headcount decision

Before you approve any further AI-driven headcount reductions, ask these three questions about each of your internal custom software systems:

Question 1: If the developer who knows this system best leaves tomorrow with two weeks’ notice, could any remaining team member deploy a change to production without their guidance? If the answer is no, your bus factor is 1, and the system is at immediate risk.

Question 2: Does complete, current documentation exist for this system’s architectural decisions, integration dependencies, and environment configurations? Not “we have a wiki.” Documentation that a new developer could use to understand the system without interviewing anyone who worked on it.

Question 3: If this system went down at 3 am on a Saturday, who would you call? If the honest answer is someone who no longer works at your company, you have a knowledge continuity problem that the last round of layoffs made structurally worse.

Most companies skip this audit, then run it in crisis mode after the system breaks.
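A rough first pass at Question 1 can be automated if you have any record of who has deployed each system. The sketch below is only a proxy for bus factor: it counts distinct deployers per system and flags systems with a single one. The log format, the system names, and the function names are all hypothetical, and a real audit would still need the conversations that Questions 2 and 3 call for.

```python
from collections import defaultdict

def bus_factor_by_system(deploy_log):
    """Count distinct people who have deployed each system.

    deploy_log: iterable of (system_name, person) tuples, for example
    exported from a deployment tool's history. This shape is an assumption.
    """
    deployers = defaultdict(set)
    for system, person in deploy_log:
        deployers[system].add(person)
    return {system: len(people) for system, people in deployers.items()}

def at_risk(deploy_log, threshold=1):
    """Return, sorted by name, the systems with at most `threshold` deployers."""
    counts = bus_factor_by_system(deploy_log)
    return sorted(s for s, n in counts.items() if n <= threshold)

# Illustrative records only; not real data.
sample_log = [
    ("billing", "ana"),
    ("billing", "ana"),
    ("inventory", "ben"),
    ("inventory", "carla"),
    ("reporting", "ana"),
]

print(bus_factor_by_system(sample_log))
print(at_risk(sample_log))
```

In this made-up log, the same person is the only deployer of two systems, which is exactly the concentration the audit is meant to surface before a headcount decision.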

The Decision You Make Before the Next Layoff

There’s a version of this that goes well. Headcount gets reduced where AI genuinely covers the gap. The people whose knowledge is irreplaceable stay until that knowledge is transferred. Documentation gets built into the delivery process, not crammed into the final two weeks before someone leaves. The bus factor is audited before the reduction list is approved, not after.

That version requires asking the hard questions before the spreadsheet is finalized. Not after the call comes six weeks later, when nobody knows how the system works.

If you’re already past that point, if the layoff happened and the knowledge gaps are now visible, the structural fix is the same. An embedded development partner with documentation-as-deliverable as a contractual standard can take over systems in poor condition and return them to a maintainable state. The goal isn’t to recreate the knowledge that left. It’s to build a structure where that failure mode can’t happen again.

Ready to audit your systems’ knowledge continuity before the next headcount decision? Talk to Nexa Devs. We work with mid-market companies on exactly this problem.

FAQ

What is the hidden cost of AI layoffs for companies?

The hidden cost is institutional knowledge loss: specifically, the system knowledge held by developers who built and maintained internal software. When those developers leave, the system becomes difficult or impossible to maintain. Forrester Research found that 55% of companies already regret AI-driven layoffs, primarily due to lost productivity and knowledge gaps that neither remaining staff nor AI tools could fill.

How do companies lose institutional knowledge when developers leave?

Developers carry tacit knowledge: architectural decisions, integration dependencies, and undocumented workarounds that are rarely written down. Research puts approximately 90% of organizational knowledge in the tacit category. When the developer leaves, that 90% disappears. Systems become unmaintainable, deployments fail, and new hires spend months reconstructing what the departing developer understood intuitively.

What is bus factor risk, and how does it affect software teams?

Bus factor measures how many team members must leave before a project becomes unmaintainable. A bus factor of 1 means one departure breaks the system. A 2023 SHRM study found 72% of companies have at least one employee whose departure would significantly disrupt operations. AI-driven layoffs systematically reduce bus factor, sometimes removing the safety margin entirely without management realizing it.

What happens to internal software systems when the developer who built them leaves?

The system remains operational initially, then becomes progressively harder to modify or extend. Every change requires reverse-engineering undocumented configurations. ClearlyAcquired’s research shows replacing high-level technical talent costs 150 to 400% of annual salary, with new hires needing 16 to 20 weeks to reach full productivity.

How do you protect institutional knowledge before laying off developers?

Three steps make a structural difference: audit your bus factor before deciding who to cut; require overlap-based handoffs with incoming developers rather than departure documentation; and build documentation into your ongoing development process. An embedded team model where multiple developers maintain shared current knowledge is structurally more resilient than individual developer arrangements.

What percentage of companies regret AI-driven layoffs?

Forrester Research’s Predictions 2026 report found 55% of employers already regret AI-driven layoffs. People Matters Global, citing Careerminds research, reported 32.9% of HR leaders said their organizations lost critical skills after AI-driven restructuring, and only 8.4% would repeat their approach unchanged.

by Sarah Mitchell | Jun 2, 2026 | AI & Innovation Hub

Legacy System AI Barrier: Why Your Stack Blocks AI (And How to Break the Deadlock)

Eighteen months. That’s how long one mid-market operations team spent trying to connect their AI tools to a legacy ERP before giving up. They weren’t missing the budget. They weren’t missing talent. What they were missing was a foundation on which AI could actually run.

The moment they modernized the underlying system, AI-assisted reporting was up and running in the first sprint. Same team. Same AI tools. Completely different result.

That’s not a coincidence. The legacy system AI barrier is structural, and most AI vendors have no incentive to tell you about it before they sell you a seat.

You’ve Tried AI. It Didn’t Work. Here’s the Part No One Told You.

The AI pilot ran for six months. The demo worked. The vendor was responsive. Then you tried to connect it to real data, and the integration broke. Or the outputs were unreliable because the underlying data was fragmented. Or it worked in isolation but couldn’t talk to the three other systems that would have made it useful. So the pilot wound down quietly, categorized as “not the right moment.”

That pattern, across thousands of mid-market companies right now, isn’t bad luck. It’s architecture.

A familiar scene in mid-market operations: a pilot that worked in the demo environment hits the wall of legacy integration.

The pilot that never made it to production

AI tools are built to run on specific conditions: clean, accessible data in near-real time; APIs that accept and return structured responses; and an architecture that allows an event in one system to trigger an action in another. When those conditions exist, AI works. When they don’t, it can’t, regardless of how good the model is.

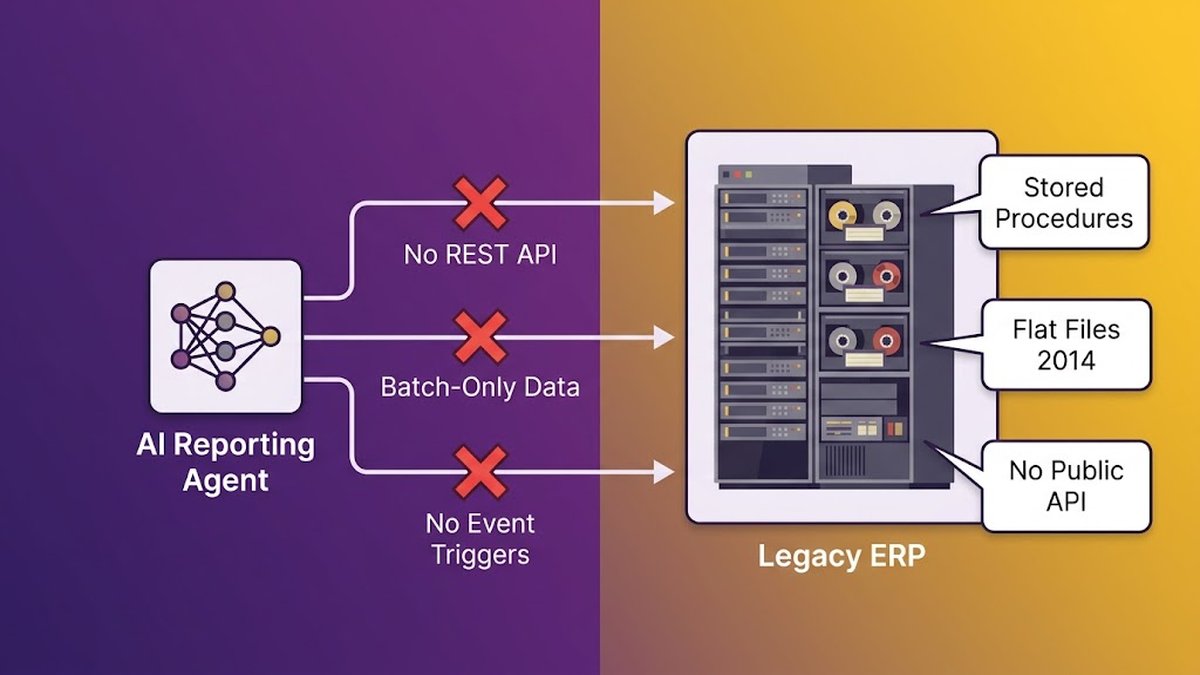

A mid-market company running a 12-year-old ERP typically lacks those conditions. Data sits in siloed tables with no public API. Business logic is buried in undocumented stored procedures. Reports are generated by querying flat files that were last redesigned in 2014. An AI agent dropped into this environment doesn’t fail because the AI is bad. It fails because the environment physically can’t give it what it needs.

The AI vendor won’t tell you this on the first call. Their demo environment is clean. Their integrations point at structured test data. By the time you discover the gap, you’ve already bought the license.

Why AI vendors don’t lead with the uncomfortable truth

AI tool vendors sell features and capabilities. Telling a prospect “your infrastructure might need 18 months of work before you can use this” is not a sales accelerator. So they don’t say it. They describe “integrations” that require your system to have an API endpoint. They show dashboards that assume your data is already normalized. They talk about “connecting your existing stack” as if that connection is trivial.

For a modern, cloud-native stack, it often is trivial. For a legacy system that pre-dates API conventions, it isn’t. The legacy system AI barrier isn’t a feature gap. It’s an architectural prerequisite the tool can’t provide for itself.

What Your Legacy Stack Is Actually Costing You (In Numbers)

Before talking about AI, there’s a more immediate number worth examining. Organizations allocate 70% of IT budgets to maintaining legacy systems, according to data confirmed by Ideas2it, leaving almost nothing for new capabilities. Not 20%. Not 40%. Seventy percent.

For a mid-market company with a $2M annual IT budget, that’s $1.4M a year spent keeping an existing system running. The remaining $600K has to cover security, upgrades, new tools, and any innovation the business actually wants to pursue. It’s not a development budget. It’s a maintenance contract.
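The arithmetic behind that example is simple enough to rerun with your own numbers. This is a minimal sketch; the function name is hypothetical, and the share is passed as a whole-number percentage so the result stays exact.

```python
def split_it_budget(annual_budget, maintenance_pct):
    """Split an IT budget into maintenance spend and what is left over.

    maintenance_pct is a whole-number percentage (70 means 70%).
    """
    maintenance = annual_budget * maintenance_pct // 100
    return maintenance, annual_budget - maintenance

# The article's example: a $2M budget with 70% going to maintenance.
maintenance, remainder = split_it_budget(2_000_000, 70)
print(f"Maintenance: ${maintenance:,}  Everything else: ${remainder:,}")
```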

The maintenance tax: 70-80% of IT budget going nowhere

That 70% figure isn’t a ceiling; it’s often a floor. At the higher end of legacy-heavy environments, the ratio shifts to 80%. Ray Forte, an executive at Analog Devices, described his situation plainly: the calculation came back “in the low 80s” when he asked what percentage of IT spend was simply keeping the lights on.

This is what we call the maintenance tax. It’s not interest on a loan you can pay off. It’s a permanent structural levy on your ability to invest in the business. Every sprint your engineering team spends patching an aging codebase is a sprint they didn’t spend building something that compounds in value.

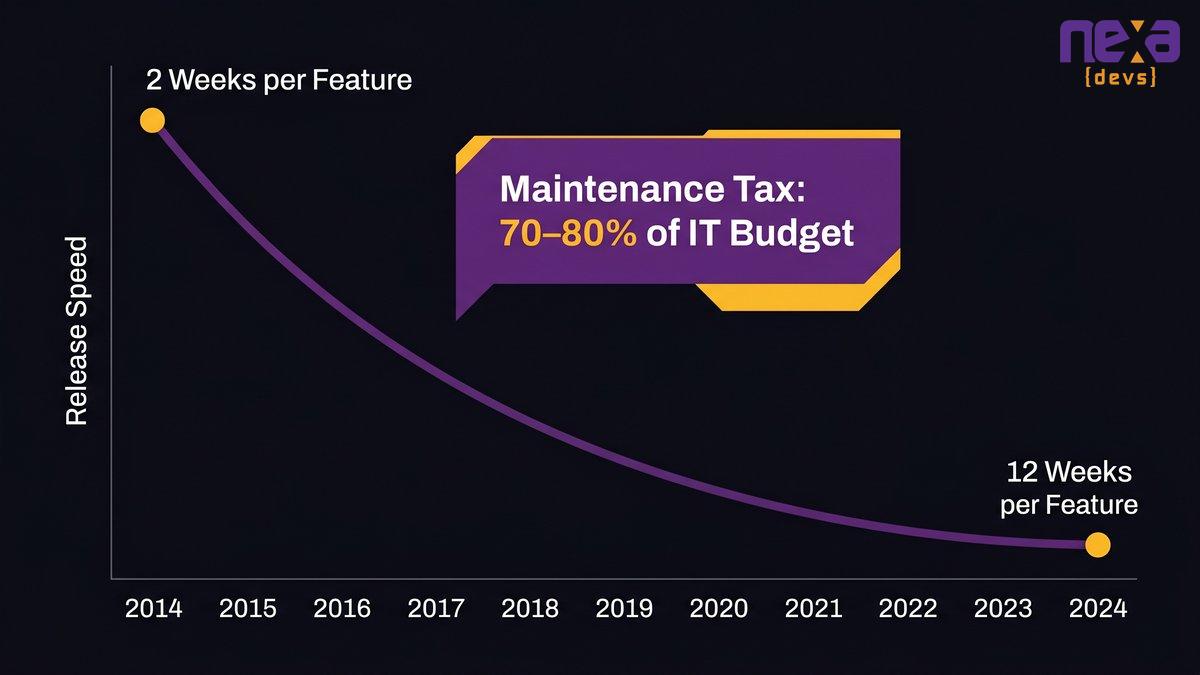

Feature velocity: when 2-week releases become 12-week releases

The maintenance tax has a secondary consequence that CEOs feel even more acutely than CFOs: features slow down.

One unnamed CEO client of a mid-market software modernization firm described it this way: “Features used to take two weeks to push three years ago. Now they’re taking 12 weeks. My developers are super unproductive.” That’s not a performance management problem. That’s what a tightly coupled codebase does to a team over time: every new feature requires understanding the blast radius of touching a system where nothing is documented, and everything is connected to everything else.

Feature velocity doesn’t decline linearly. It compounds downward as the codebase accumulates dependencies.

The compounding cost of delay

Here’s the dynamic that makes this genuinely dangerous: every quarter you don’t address the underlying architecture, both costs go up. The maintenance burden grows as the gap between the legacy system and modern tooling widens. The feature tax grows as developers spend more time navigating an increasingly complex codebase. And the AI readiness gap compounds independently on top of both of those curves.

Waiting is not a neutral choice. It’s an active cost decision made by inaction.

Why AI Cannot Run on a Foundation It Was Never Built For

Deloitte’s 2026 Tech Trends report found that nearly 60% of AI leaders view legacy-system integration as the primary barrier to agentic AI adoption. Not insufficient budget. Not missing talent. The infrastructure itself.

This isn’t a soft barrier. It’s a hard technical incompatibility.

What agentic AI actually needs: real-time data, APIs, event-driven architecture

Agentic AI, the kind that automates workflows, generates reports, monitors operations, and makes decisions, requires three things from the underlying system it connects to:

Real-time data access. An AI agent that queries a database replicated once per day isn’t actually intelligent; it’s working with yesterday’s information. For agentic workflows (automated anomaly detection, dynamic reporting, AI-assisted approvals), the data layer must be live or near-live. Legacy ERPs built on batch-processing architectures weren’t designed for this.

Callable API endpoints. AI agents interact with other systems by calling endpoints and reading structured responses. If your ERP doesn’t expose modern REST or GraphQL APIs, the agent has no supported way to get data out or push decisions in. Some integrators work around this using screen scraping or RPA tools, but those are bridges, not solutions. They break whenever the UI changes and accumulate their own maintenance burden.

Event-driven triggers. The most useful AI agents don’t wait to be asked; they respond to events. A new order is created. A threshold is crossed. A document is submitted. Legacy systems built around polling architectures and batch jobs can’t fire events because they were never designed to. They produce data; they don’t announce that data has changed.
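To make the third requirement concrete, here is a minimal in-process sketch of the publish/subscribe idea behind event-driven triggers. It is not how any particular ERP or agent platform works. The `EventBus` class, the `order.created` event name, and the 10,000 threshold are all assumptions chosen for illustration. The point is the direction of control: the source system announces a change, and the agent reacts without polling.

```python
from collections import defaultdict
from typing import Callable, Dict, List

class EventBus:
    """Tiny in-process publish/subscribe hub (illustrative only)."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Callable[[dict], None]]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Callable[[dict], None]) -> None:
        self._handlers[event_type].append(handler)

    def publish(self, event_type: str, payload: dict) -> None:
        # The source system announces the change; subscribers never poll for it.
        for handler in self._handlers[event_type]:
            handler(payload)

flagged_orders: List[str] = []

def flag_large_order(order: dict) -> None:
    """Stand-in for an agent action: flag orders above a hypothetical threshold."""
    if order["total"] > 10_000:
        flagged_orders.append(order["id"])

bus = EventBus()
bus.subscribe("order.created", flag_large_order)

# A modern system would publish these as orders are created.
bus.publish("order.created", {"id": "SO-1001", "total": 2_500})
bus.publish("order.created", {"id": "SO-1002", "total": 18_000})

print(flagged_orders)
```

A batch-oriented system has no equivalent of the `publish` call, so an agent can only discover the second order by querying for it later.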

When an AI integration fails, the instinct is to blame the AI tool. Wrong direction. The AI tool is usually working exactly as documented. What failed is the contract between the AI tool and the legacy system, and that contract requires the legacy system to provide something it structurally cannot.

This is why API wrappers only solve part of the problem. A wrapper can expose read access to legacy data through a modern API endpoint. It can’t give you real-time events from a batch-processing system. It can’t clean fragmented, inconsistent data at the source. The underlying architectural constraints remain.

The 60% barrier: when integration is the primary blocker, not skill or budget

The 60% figure from Deloitte deserves examination as a signal rather than just a statistic. These are AI leaders at companies with the budget, the strategy, and presumably the talent, yet they’re still blocked. What’s blocking them isn’t something they can hire their way out of. It’s architectural. The systems their AI needs to integrate with weren’t built for it.

Mid-market companies face this problem with fewer resources than the enterprises Deloitte surveyed. The constraint is sharper, the margin for error smaller, and the window to address it is shorter.

The 18-Month Trap: Why Mid-Market AI Pilots Never Reach Production

92% of mid-market AI strategies stall at the architecture phase, not the model selection phase, not the talent phase, not the budget phase, according to CetDigit’s analysis. The architecture phase. The part where you discover that the AI tool you bought can’t actually reach the data it needs.

This is the 18-month trap. Companies cycle through it in predictable stages.

From isolated experiment to structural barrier

- Month one: the vendor demos the product. Data flows beautifully in the demo environment. The use case is compelling. The contract gets signed.

- Months two and three: your team starts the integration. They discover the legacy ERP doesn’t have an API for the data the AI tool needs. They build a workaround.

- Months four through eight: the workaround works in staging but fails under load, or produces inconsistent data, or breaks when the ERP vendor pushes an update.

- Months nine through twelve: a third-party integration consultant is brought in. They build a more robust bridge. It costs more than the AI tool license.

- Month eighteen: the pilot is still in staging, the original use case has drifted, and the team is quietly deprioritizing it for Q3.

That’s not a failure of execution. That’s a structural barrier presented as a project problem.

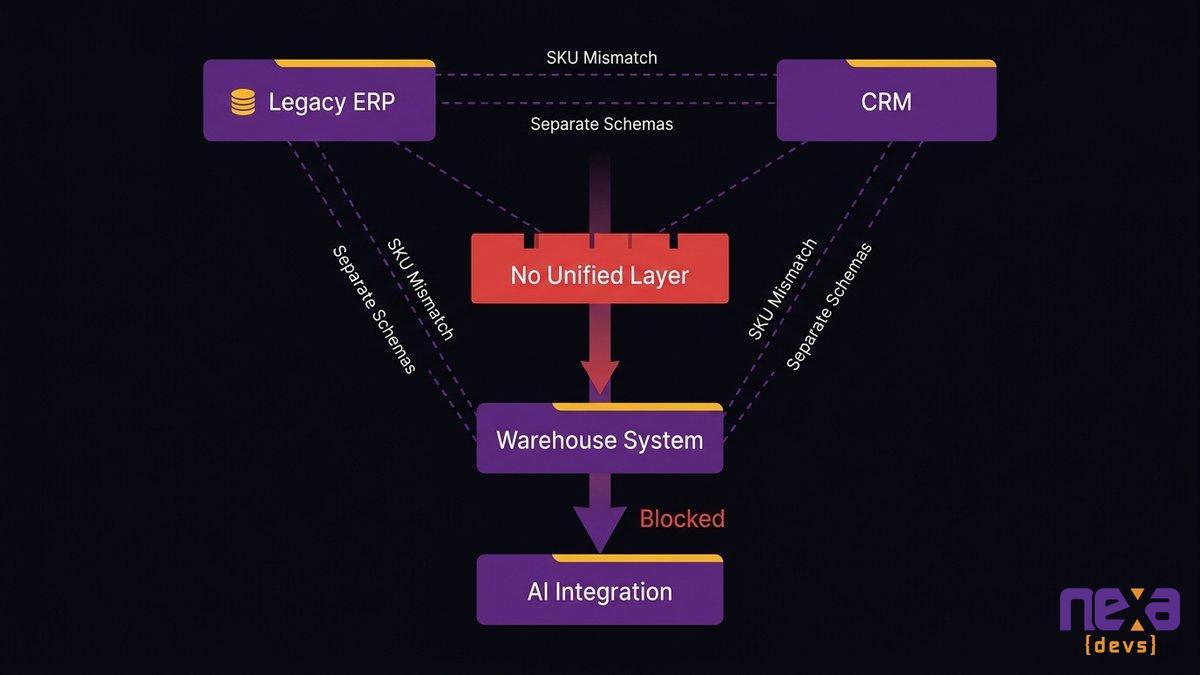

Data that can’t talk to itself can’t talk to AI

The specific bottleneck in most mid-market AI failures is data fragmentation. The customer record in the CRM doesn’t match the customer record in the ERP because they were entered separately and never reconciled. The inventory data in the warehouse system uses a different SKU schema than the finance system. The operational data from the field is collected in spreadsheets that get uploaded manually twice a week.

An AI tool can’t reconcile this fragmentation. It can only report on it or fail against it. Before AI can generate useful output, the data it reads has to mean the same thing across systems, and in most mid-market legacy environments, it doesn’t.
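A small sketch shows what “mean the same thing across systems” involves in practice. It compares SKU quantities from two systems after normalizing the keys to a shared format. The normalization rule, the function names, and the sample records are invented for illustration. Real reconciliation usually needs a mapping table agreed with the business rather than a string rule.

```python
def normalize_sku(raw: str) -> str:
    """Reduce a SKU to uppercase letters and digits (a hypothetical convention)."""
    return "".join(ch for ch in raw.upper() if ch.isalnum())

def find_mismatches(warehouse: dict, finance: dict) -> dict:
    """Compare two SKU -> quantity maps after normalizing their keys.

    Returns a dict of SKU -> description for every SKU that is missing
    from one side or carries a different quantity.
    """
    wh = {normalize_sku(k): v for k, v in warehouse.items()}
    fin = {normalize_sku(k): v for k, v in finance.items()}
    issues = {}
    for sku in sorted(wh.keys() | fin.keys()):
        if sku not in wh:
            issues[sku] = "missing in warehouse"
        elif sku not in fin:
            issues[sku] = "missing in finance"
        elif wh[sku] != fin[sku]:
            issues[sku] = f"quantity differs: {wh[sku]} vs {fin[sku]}"
    return issues

# Made-up records: the two systems format the same SKUs differently.
warehouse_stock = {"ab-100": 40, "AB-200": 12, "ab-300": 7}
finance_stock = {"AB100": 40, "AB200": 15, "AB400": 3}

print(find_mismatches(warehouse_stock, finance_stock))
```

Only one of the four SKUs agrees across both systems here, and that is after the formatting difference has been handled. Anything an AI tool reports from either source alone inherits the disagreement.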

Most mid-market environments have three or more systems with separate data schemas and no unified layer for AI integration.

Why 92% of mid-market AI strategies stall at the architecture phase

The 92% figure from CetDigit is specific: the stall happens at the architecture phase. Not later. Not during model fine-tuning. At the point where teams realize the underlying system can’t support what they’re trying to build.

This pattern is the clearest evidence that the problem isn’t AI readiness in the abstract sense. It’s infrastructure readiness in the very specific sense: does your system have the APIs, the data quality, and the architectural patterns that AI integration requires? For most mid-market companies running systems built before 2015, the answer is no.

The RSM 2025 AI Survey found that 53% of middle market firms feel only somewhat prepared to implement AI, with another 10% not prepared at all. These aren’t companies that don’t understand AI. They’re companies that understand, accurately, that their infrastructure isn’t ready for it.

What Breaking the Deadlock Actually Looks Like

When a mid-market team acknowledges the architecture problem, they typically see two options. Neither one works particularly well in isolation.

The problem with “AI first, modernize later.”

Some companies try to run the AI layer over the existing system using API wrappers, middleware connectors, and RPA bridges. This works, partially, temporarily. You get some AI capability at the cost of a fragile, expensive integration layer that needs its own maintenance budget. Every legacy system update risks breaking the bridge. Every new AI use case requires another round of custom integration work.

More fundamentally, this approach doesn’t fix the underlying problem. The data quality issues remain. The batch-processing architecture remains. The lack of event-driven triggers remains. You’re not building AI capability; you’re building infrastructure to approximate AI capability while deferring the real work.

The problem with “modernize everything, then add AI.”

The alternative, modernize the full system before touching AI, sounds more logical, but it has its own failure mode. Full modernization projects for mid-market systems typically run 18 to 36 months and cost far more than initial estimates. Gartner reports 70% of legacy modernization programs exceed budget by 30% or more.

By the time the modernization is complete, the AI landscape has shifted. The use cases you designed for in year one are different from the ones that matter in year three. The AI tools your team evaluated during scoping may have been superseded. You’ve spent 30 months building the runway and the planes have changed.

The third path: modernize the foundation and embed the AI in the same engagement

The approach that actually breaks the deadlock is neither of those. It’s treating modernization and AI integration as a single engagement rather than two sequential projects.

This is how it works in practice: you don’t modernize everything first and then add AI. You identify the specific architectural barriers blocking the AI use cases that matter most, modernize those components incrementally, and build the AI integration directly into the newly modernized layer as you go. Each modernization phase unlocks a new AI capability. Nothing gets built twice.

The operations team we described at the start of this post went through exactly this process. They didn’t spend 18 months modernizing their ERP before touching AI. They worked with a partner who identified the specific integration wall, the reporting module, modernized that layer, and had AI-assisted reporting running in the first sprint. The rest of the ERP modernization continued in parallel, each phase unlocking the next AI capability on the roadmap.

That’s the model. Not AI-first-then-modernize. Not modernize-everything-then-add-AI. Both outcomes, delivered in one engagement, sequenced by what the AI roadmap actually needs.

Incremental Modernization vs. Full Rewrite: The Decision Mid-Market CTOs Get Wrong

Most CTOs facing a legacy modernization decision frame it as binary: modernize incrementally, or rewrite completely. The right answer is almost always incremental. A full rewrite is rarely the correct choice for a mid-market system, and when it is, the reasons have nothing to do with AI readiness.

The strangler fig pattern explained for non-developers

The strangler fig is the canonical pattern for incremental legacy modernization. The name comes from a tree that grows around an existing structure, gradually replacing it without ever requiring the original to go offline. In software terms, you build new, modern components alongside the legacy system and route traffic to them as they’re validated, without ever taking the legacy system down for a full replacement.

For a mid-market CEO, the practical implication is this: your team keeps shipping, your operations keep running, and the legacy system is progressively replaced by modern architecture. No big-bang cutover. No six-month development freeze. No single catastrophic risk event.
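For readers who want to see the mechanics, the routing idea at the heart of the strangler fig can be sketched in a few lines. This is a simplified illustration, not a production gateway. The `StranglerRouter` class, the handler functions, and the path prefixes are hypothetical. In a real system this role is typically played by a reverse proxy or API gateway in front of both the legacy and the modern components.

```python
from typing import Callable, Dict

def legacy_handler(path: str) -> str:
    return f"legacy system handled {path}"

def modern_reporting_handler(path: str) -> str:
    return f"modern service handled {path}"

class StranglerRouter:
    """Send requests to migrated components by path prefix, else to legacy."""

    def __init__(self, legacy: Callable[[str], str]) -> None:
        self._legacy = legacy
        self._migrated: Dict[str, Callable[[str], str]] = {}

    def migrate(self, prefix: str, handler: Callable[[str], str]) -> None:
        # Called once a modern component has been validated.
        self._migrated[prefix] = handler

    def handle(self, path: str) -> str:
        for prefix, handler in self._migrated.items():
            if path.startswith(prefix):
                return handler(path)
        # Anything not yet migrated keeps flowing to the legacy system.
        return self._legacy(path)

router = StranglerRouter(legacy_handler)

before = router.handle("/reports/monthly")   # legacy still serves reports
router.migrate("/reports", modern_reporting_handler)
after = router.handle("/reports/monthly")    # same request, new component
untouched = router.handle("/orders/42")      # orders not migrated yet

print(before)
print(after)
print(untouched)
```

Each call to `migrate` moves one slice of traffic. The legacy system stays online throughout and simply receives less over time.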

What incremental modernization actually costs and how long it takes

Incremental modernization for mid-market core systems typically requires 3 to 6 months per major component and costs significantly less than a full rebuild. The timeline depends on component complexity, data migration scope, and the degree of undocumented dependencies, the last of which is almost always higher than initial estimates suggest.

The relevant comparison isn’t “how much does incremental modernization cost” but “how much does it cost relative to continuing to pay the maintenance tax while the AI opportunity compounds.” At a 70% maintenance budget allocation, the question becomes: how many quarters does the current situation have to continue before it costs more than the modernization?
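That break-even question can be sketched as a short calculation. The quarterly maintenance figure below follows from the earlier $2M budget example at 70% ($1.4M a year, or $350,000 a quarter). The $600,000 modernization cost and the assumption that half of maintenance spend is avoidable are invented for illustration, so substitute your own estimates.

```python
import math

def quarters_to_break_even(modernization_cost, quarterly_maintenance, avoidable_share):
    """Whole quarters until cumulative avoidable maintenance spend
    meets or exceeds a one-time modernization cost."""
    avoidable_per_quarter = quarterly_maintenance * avoidable_share
    if avoidable_per_quarter <= 0:
        raise ValueError("avoidable maintenance per quarter must be positive")
    return math.ceil(modernization_cost / avoidable_per_quarter)

# Hypothetical inputs: $600,000 modernization, $350,000 quarterly maintenance,
# half of which is assumed to disappear once the component is modernized.
quarters = quarters_to_break_even(600_000, 350_000, 0.5)
print(f"Break-even after {quarters} quarters")
```

The sketch ignores the rising cost of delay and the value of the AI capability unlocked, both of which would shorten the break-even period further.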

When a full rewrite is the right answer (and when it’s not)

A full rewrite makes sense in three specific situations: when the existing system is so deeply undocumented that incremental modernization would require rebuilding it to understand it; when the technology stack is genuinely end-of-life with no incremental migration path; or when the business model has changed so completely that the existing system shares no meaningful logic with what needs to be built.

In mid-market software, those conditions are rare. Most legacy systems can be modernized incrementally. The CTO’s instinct toward a full rewrite is often driven by the frustration of working in a poorly documented codebase, which is real and understandable, but not a sufficient reason to accept the financial and operational risk of starting from zero.

The big-bang rewrite is the riskiest path. For mid-market organizations, it’s almost never the right one.

How to Know If Your Stack Is the Real Barrier (A Self-Audit for CEOs and CTOs)

Before engaging a vendor or budgeting a modernization, you can diagnose the problem yourself. The following five questions don’t require a technical audit. They require honest answers from the people who work in the system daily.

The self-audit takes an afternoon. The answers will tell you more than a vendor’s discovery phase.

Five questions that reveal your AI readiness gap

1. If you wanted to show a live dashboard of today’s operational data, how long would it take to build?

If the answer is “weeks” or “we’d need to write a custom script,” your data layer isn’t accessible enough for AI. Real-time AI reporting requires real-time data access. If you can’t build a basic live dashboard, you can’t build AI-driven analytics.

2. When your CRM or ERP vendor releases an update, do integrations break?

If the answer is “sometimes” or “we have to check,” your integrations are brittle. AI tools can’t operate on brittle integrations; they need stable, predictable data contracts. Brittle integrations aren’t an IT operations problem. They’re an architectural signal.

3. Can your developers add a new data field to a core object without fear of breaking something else?

If the answer involves phrases like “we have to trace all the dependencies first” or “we usually do it at night in case something breaks,” your codebase is tightly coupled in ways that will make AI integration significantly more expensive than any vendor’s estimate suggests.

4. Is there documentation that would allow a new developer to understand the system’s architecture in a week?

No documentation means no AI. Literally: AI-assisted development tools work on documented, navigable codebases. But more practically, the lack of documentation means the AI integration work will cost significantly more because every step requires archaeological work. If the team doesn’t know what they have, neither will the AI tool.

5. Have you tried to connect any AI tool to your core systems in the last two years? What happened?

If the answer involves “we’re still working on the integration” or “we deprioritized it,” you’ve already hit the legacy system AI barrier. The pilot didn’t fail because the AI was wrong. It failed because the foundation wasn’t ready.

Red flags in your current architecture

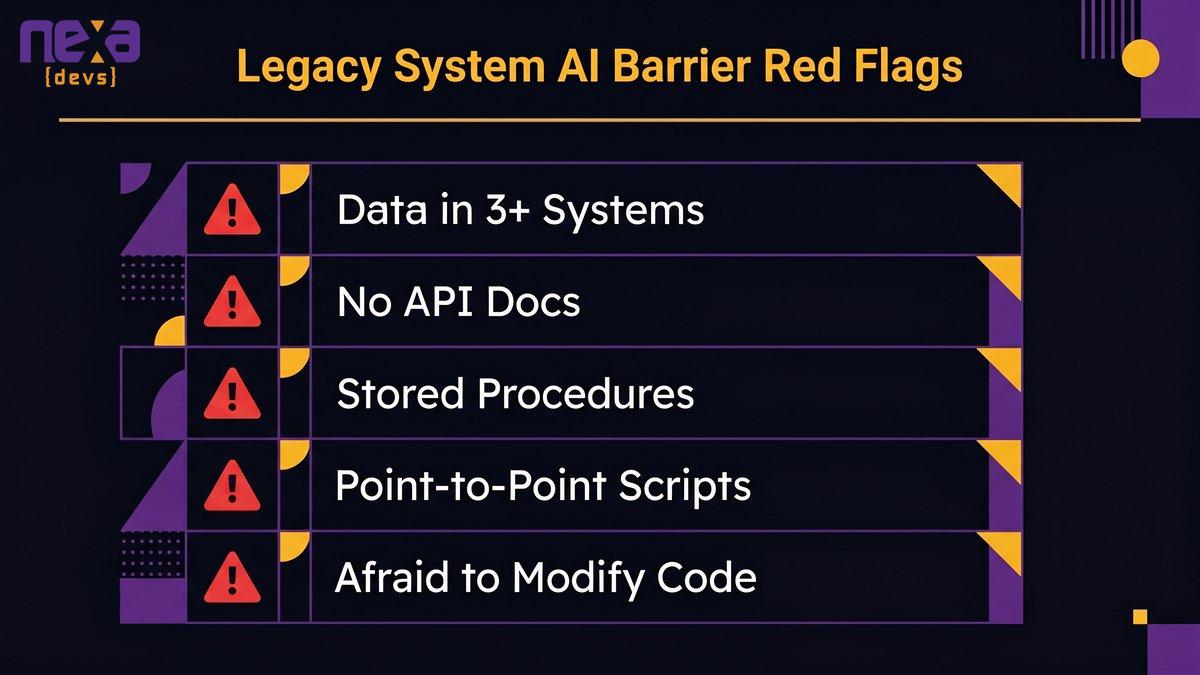

Any of the following conditions indicates a legacy system AI barrier requiring architectural work before AI integration will succeed:

- Data split across more than three systems with no master data management layer

- Core business logic embedded in database stored procedures that nobody has reviewed in five years

- Integrations built as point-to-point custom scripts rather than through an integration layer

- No API documentation for core systems (or no APIs at all)

- Developers who are afraid to modify certain parts of the codebase

What readiness looks like at mid-market scale

AI readiness doesn’t require a complete cloud migration or a microservices rewrite. At mid-market scale, readiness means: your core data is accessible through a modern API, your key entities are consistent across systems, and your architecture can accept an event-driven trigger without a custom build for every new use case. That’s achievable incrementally, without disrupting operations, in a reasonable timeframe.

The Two-Year Window You Can’t Afford to Miss

As Skylar Roebuck, CTO at Solvd, stated in The Tech Panda: “Traditional modernization tends to over-index on protecting how things work today rather than building for what’s next. AI capability is compounding rapidly, and the real risk for mid-market companies is delay.”

That statement has a specific mathematical implication. AI capability compounds. Your legacy system’s value doesn’t.

The competitive gap that opens when AI-native competitors move first

The companies that are modernizing now aren’t doing it because they have excess budget. They’re doing it because they understand the competitive dynamic. When an AI-native competitor can ship a new feature in two weeks and your team needs twelve, the gap isn’t just operational; it’s directional. They’re compounding in the right direction.

Gartner predicts 40% of agentic AI projects will be canceled by 2027 due to infrastructure constraints. The companies that survive that cancellation rate won’t be the ones with the best AI strategy. They’ll be the ones whose infrastructure could support the AI they tried to deploy.

The mid-market companies that break the legacy-AI deadlock in the next 24 months will exit that window with compounding AI capability and a modernized architecture. The ones that don’t will exit that same window having watched competitors capture market share with capabilities that their stack simply couldn’t support.

Why delay compounds: each quarter deferred raises modernization cost

The modernization cost calculation gets worse with time, not better. Every quarter that passes, the gap between your legacy system and the modern tooling it needs to integrate with grows wider. Dependencies accumulate. Undocumented logic compounds. Engineers who know the system move on. The contractor who built the 2012 ERP customization retires. The knowledge required to modernize safely becomes thinner and more expensive to reconstruct.

Waiting twelve months doesn’t defer a fixed cost. It raises the cost by 15–25% while simultaneously narrowing the window of competitive opportunity.

What “AI-ready” looks like by 2028, and what happens if you’re not there

By 2028, the competitive baseline in most mid-market industries will include AI-assisted operations as a standard capability, not a differentiator. Companies that are running AI-assisted reporting, automated exception handling, and AI-accelerated development workflows will treat those capabilities as table stakes. Companies still running batch-processing ERPs from 2012 won’t be competing on AI strategy. They’ll be competing on cost, and losing.

The window to make the foundational investment at a manageable cost is the next 24 months. After that, the modernization becomes more expensive, the AI gap becomes more pronounced, and the competitive cost of delay becomes structural rather than recoverable.

The Foundation Is the Decision

Your AI strategy isn’t blocked by the AI tool you chose or the consultants you hired. It’s blocked by the infrastructure that those tools have to run on. Two weeks per feature became twelve weeks because the stack accumulated a decade of undocumented complexity. The AI pilot ran for eighteen months and never reached production because the ERP couldn’t provide what the AI tool required.

The fix isn’t another AI vendor conversation. It’s an architectural one.

The companies winning the AI race right now aren’t the ones with the most sophisticated models. They’re the ones whose underlying systems can actually run them. That’s an achievable state for mid-market organizations, but not with an off-the-shelf AI layer bolted onto a legacy ERP. It requires fixing the foundation, and doing so while building the AI capability on top of it.

Both outcomes are one engagement. That’s the path through.

Read how a mid-market operations team eliminated the AI readiness gap

Ready to find out if your stack is the real barrier? Schedule an architecture assessment with Nexa Devs to map your legacy system against your AI roadmap, and see exactly which components need to change before your next pilot.

by Sarah Mitchell | May 28, 2026 | Business and Technology

Code Ownership Contract: Who Really Owns Your Software?

You paid for it. Your team spent months in requirements sessions, sprint reviews, and UAT cycles. The vendor delivered. The project closed. You moved on.

Then something changed. You needed to modify the product. Or a competitor made an acquisition offer. Or your vendor went quiet. And someone in legal asked a question that stopped the room: “Do we actually own this code?”

The answer, for a startling number of mid-market companies, is no. Or at minimum, not clearly. Payment does not automatically create code ownership. A code ownership contract with specific transfer language does. Without it, U.S. copyright law leaves ownership with the developer by default. Paying for development gives you a working product. It does not give you the legal right to do anything you want with it. Those are two different things.

This guide covers the specific contract clause that determines ownership, the exact language that transfers it (and the wording that doesn’t), real scenarios where the gap has cost companies serious leverage, and what to require in any vendor agreement before you sign.

The Default Rule No One Tells You: Your Vendor Owns the Code Until a Contract Says Otherwise

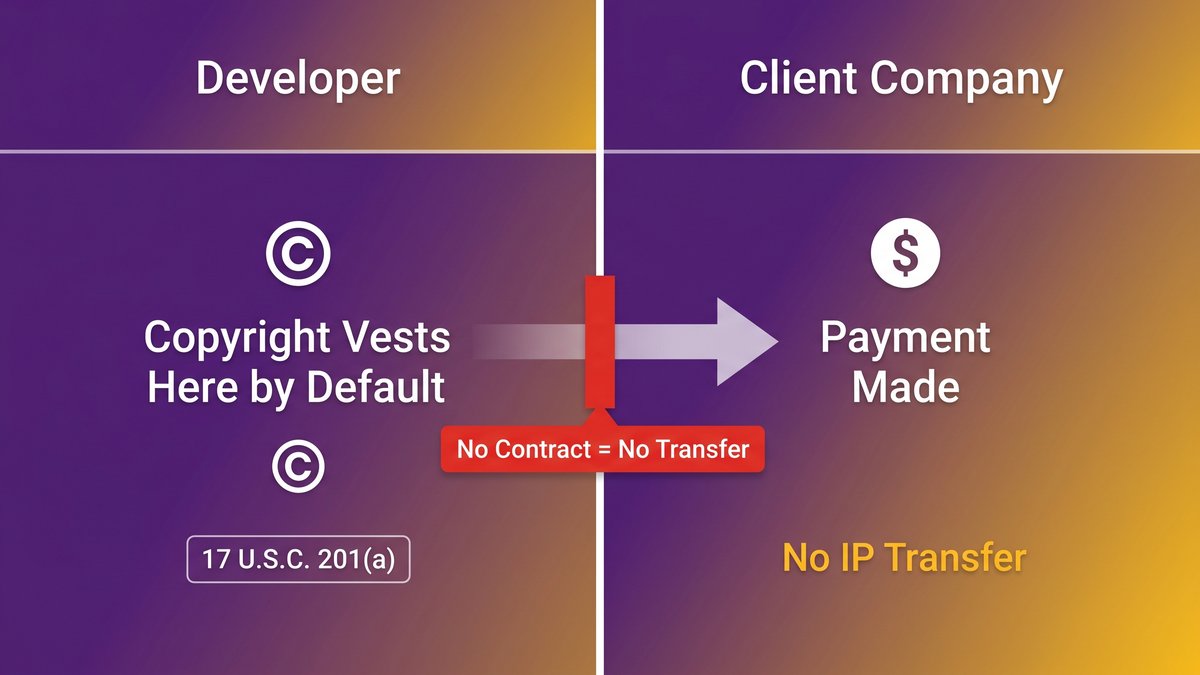

Under U.S. copyright law, the person who creates a work owns it. Full stop. Section 17 U.S.C. 201(a) establishes that copyright ownership vests initially in the author. In outsourced development, that means the developer, not the client who paid for it.

This surprises executives every time. The intuition is that commissioning work equals owning the result. It doesn’t. Not under U.S. copyright law. Not without a contract that explicitly says otherwise.

What U.S. Copyright Law Says About Contractor-Written Code

A visual comparison of who holds copyright by default under U.S. law versus what a contract assignment clause changes.

When an employee writes code within the scope of their job, the company owns it under the “work made for hire” doctrine: the law treats the employer as the author from the outset, so no separate transfer is needed. Contractors are different. An independent developer or an outsourced vendor working under a services agreement is not an employee. They own what they build unless the contract transfers ownership to you.

The law is unambiguous on this point, and it doesn’t care about your invoice history or the number of Zoom calls you attended.

Why “We Paid for It” Doesn’t Mean You Own It

The common assumption is that payment creates ownership. It creates an obligation, sometimes a license, but not a transfer of intellectual property. You may have the right to use the software as delivered. You likely do not have the right to modify, sub-license, resell, or build additional products on top of it without the vendor’s consent.

Possession and IP ownership are also distinct. Having access to code files is not the same as owning the legal rights to that code. A vendor can hand over a GitHub repo while retaining the IP. The distinction isn’t technical. It’s contractual.

For the parallel risk of owning code without documentation, see: “Outsourcing Software Development: Why Documentation Is the New Competitive Advantage.”

Work for Hire: What It Covers, What It Doesn’t, and Why Software Falls in the Gap

“Work for hire” sounds like a complete solution. Commission work, receive ownership. But the doctrine has specific legal requirements, and software written by independent contractors doesn’t meet them automatically.

The Nine Categories That Define Work for Hire (and Why “Software” Isn’t Always One of Them)

U.S. copyright law defines two situations where a work qualifies as “made for hire.” First: work created by an employee within the scope of employment. Second: work specially ordered or commissioned, but only if it falls into one of nine specific statutory categories AND the parties sign a written agreement calling it “work for hire.”

Those nine categories include things like contributions to collective works, compilations, instructional texts, and translations. Custom software written for a client’s internal use does not appear on that list. A contract can include “work for hire” language, but unless the work also falls into a qualifying category, the classification doesn’t hold.

Even when “work for hire” language is in the contract, courts have questioned whether custom software actually fits the statutory categories. That legal uncertainty is the gap.
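The two-part test described above can be sketched as a simple decision function. This is a simplified illustration, not a legal determination: the category strings paraphrase the nine categories in 17 U.S.C. § 101, the function and parameter names are hypothetical, and real disputes turn on facts (such as who counts as an employee) that a boolean flag cannot capture.

```python
from typing import Optional

# The nine commissioned-work categories, paraphrased from 17 U.S.C. § 101.
STATUTORY_CATEGORIES = frozenset({
    "contribution to a collective work",
    "part of a motion picture or other audiovisual work",
    "translation",
    "supplementary work",
    "compilation",
    "instructional text",
    "test",
    "answer material for a test",
    "atlas",
})


def is_work_made_for_hire(created_by_employee_in_scope: bool,
                          category: Optional[str] = None,
                          signed_written_agreement: bool = False) -> bool:
    """Apply the two statutory routes to work-made-for-hire status."""
    # Route 1: an employee working within the scope of employment.
    if created_by_employee_in_scope:
        return True
    # Route 2: commissioned work needs BOTH a listed category and a signed writing.
    return category in STATUTORY_CATEGORIES and signed_written_agreement


# A contractor builds custom internal software under a contract that says "work for hire".
print(is_work_made_for_hire(False, "custom internal software", True))
```

In this sketch the contractor example fails the second route even with a signed agreement, because the category is not on the list. That is the gap an assignment clause closes.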

The Contractor Exception: When Independent Developers Fall Outside Work-for-Hire

An independent contractor (a freelancer, a boutique dev shop, a nearshore vendor) is not an employee. The automatic work-for-hire rule that applies to employees does not apply to them. Every piece of software they write for you defaults to their ownership unless you contract specifically for IP transfer.

Many mid-market companies discover this only after delivery. According to SmallBizClub (via Netcorp Software Development), 39% of IT outsourcing projects fail due to poor planning. Inadequate IP provisions are a structural planning failure, not an execution one.

The fix is not to rely on “work for hire.” The fix is an assignment clause.

The Assignment Clause: The Exact Language That Transfers Ownership (and the Wording That Doesn’t)

The assignment clause is where the code-ownership contract is actually executed. Get this right, and you own the product. Get it wrong, and you’ve paid for a license, not an asset.

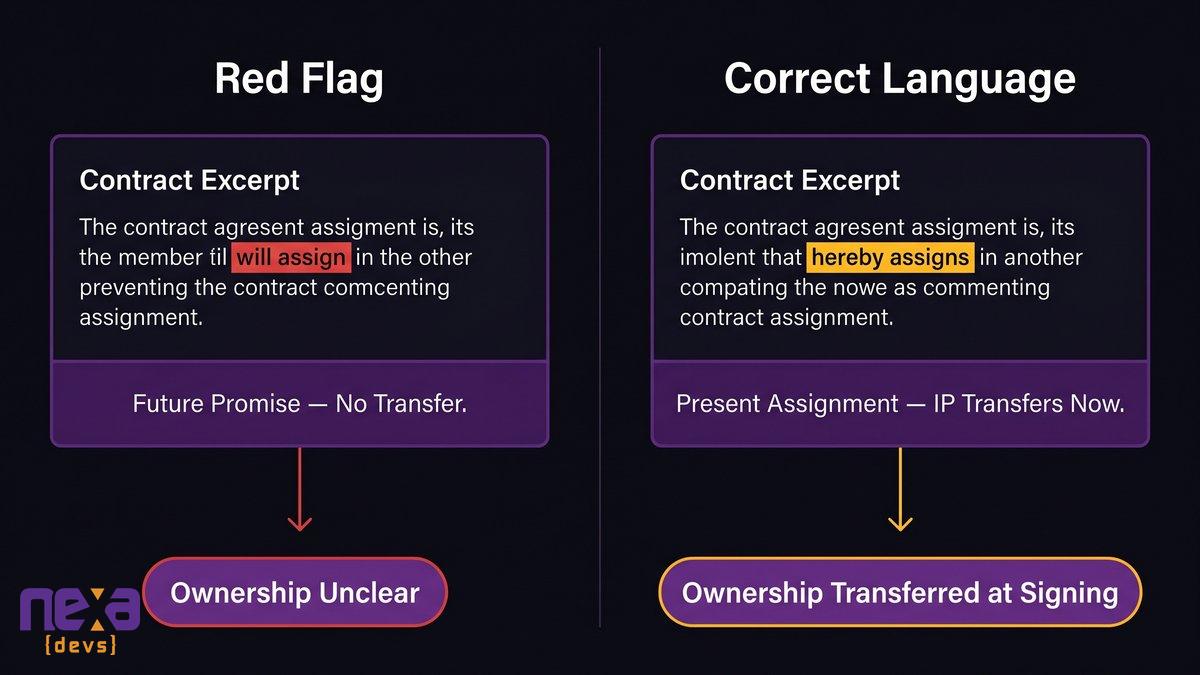

Side-by-side contract language comparison: a present assignment clause versus a promise-to-assign clause, with the legal consequences of each.

Promise to Assign vs. Present Assignment: Why One Word Changes Who Owns the Product

This distinction was cemented in federal case law. In Advanced Video Techs. LLC v. HTC Corp. (Federal Circuit, 2018), a patent ownership dispute, the court ruled that an agreement stating that the creator “will assign” IP constitutes only a promise of future transfer, not an actual present assignment. The practical consequence: under “will assign” wording, the IP transfer doesn’t happen automatically when the project closes. It still requires a separate act of assignment.

A present assignment uses different language. “Hereby assigns” or “does hereby assign” creates the transfer at the moment of signing. No additional action required. No future obligation to fulfill. The IP moves to you when the ink is dry, not later.

The difference in writing is often a single word. “Will assign” versus “hereby assigns.” The business consequence is enormous.

Contract Language That Actually Works, and Red Flag Phrases to Reject

Language that transfers ownership:

– “Vendor hereby assigns to Client all right, title, and interest in and to the Work Product, including all intellectual property rights therein.”

– “All Work Product created under this Agreement shall be and is hereby assigned to Client upon creation.”

Red flag language to push back on:

– “Vendor agrees to assign” (future promise, not present transfer)

– “Vendor will provide Client with a license to use the Work Product” (you’re getting a license, not ownership)

– “Client shall have a perpetual, irrevocable license…” (a license, even a broad one, is not ownership)

– No IP section at all (silence defaults to the developer)

An IP assignment clause that transfers “all right, title, and interest,” including “intellectual property rights,” is the minimum standard. Anything less warrants a conversation with legal before signing.
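As a first-pass aid before that conversation, the phrases above can be turned into a rough text screen. This is a minimal sketch, assuming plain-text contract excerpts; the function name and result keys are hypothetical, the patterns cover only the wording listed in this section, and a match or non-match is a prompt for legal review rather than a conclusion.

```python
import re

# Present-assignment wording: "hereby assigns", "does hereby assign", "is hereby assigned".
PRESENT_ASSIGNMENT = re.compile(r"\bhereby assign(s|ed)?\b")

# Red-flag wording from the list above, paired with the reason it matters.
RED_FLAGS = [
    (re.compile(r"\b(agrees to|will) assign\b"),
     "promise to assign in the future, not a present transfer"),
    (re.compile(r"\blicen[cs]e\b"),
     "license language, which grants use rights rather than ownership"),
]


def screen_ip_clause(text: str) -> dict:
    """Flag assignment and license wording in a contract excerpt."""
    normalized = " ".join(text.lower().split())  # collapse whitespace and case
    present = bool(PRESENT_ASSIGNMENT.search(normalized))
    flags = [reason for pattern, reason in RED_FLAGS if pattern.search(normalized)]
    return {
        "present_assignment": present,
        "red_flags": flags,
        # Neither kind of wording found: treat as possible silence on IP.
        "no_assignment_or_license_language": not present and not flags,
    }


for clause in (
    "Vendor hereby assigns to Client all right, title, and interest in and to the Work Product.",
    "Vendor agrees to assign all Work Product to Client.",
    "Client shall have a perpetual, irrevocable license to the Work Product.",
):
    print(screen_ip_clause(clause))
```

A screen like this only surfaces wording. It cannot tell whether a license clause sits alongside a valid assignment, so treat every flag as a question for counsel.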

For a vendor selection framework that includes IP screening, see: “Staff Augmentation vs. Dedicated Team: Who’s Accountable?”

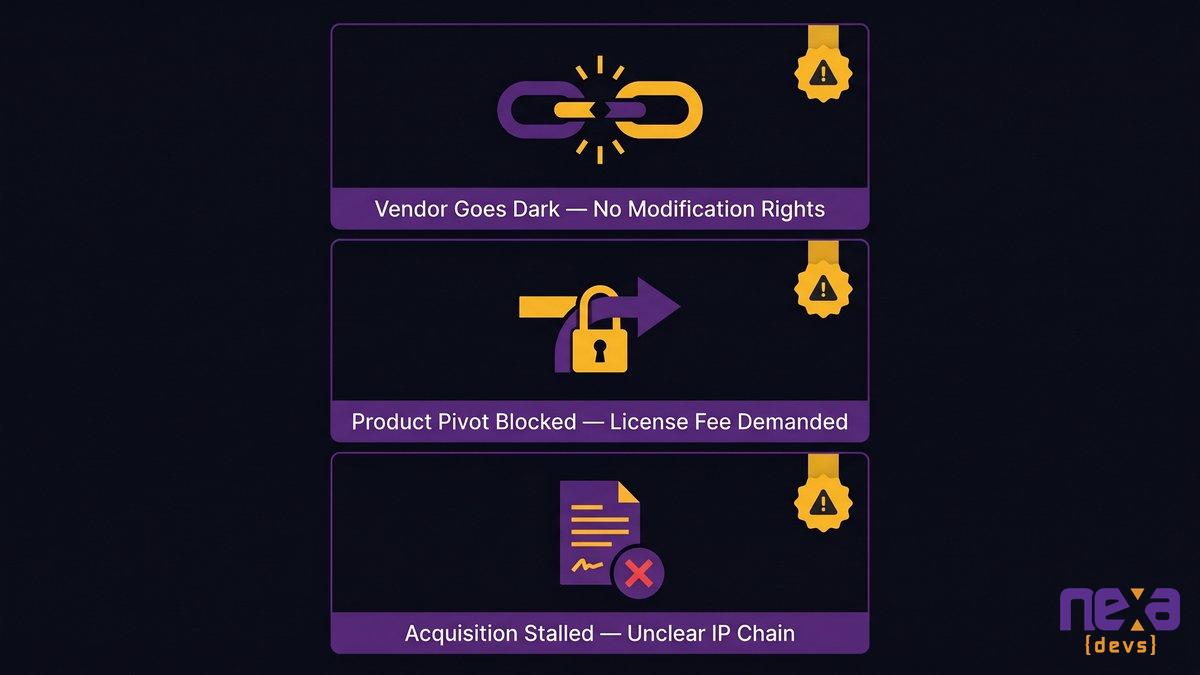

What Happens When the Clause Is Missing: Real Scenarios Mid-Market Companies Face

Abstract legal risk becomes real when the vendor relationship changes, the product needs to evolve, or an acquisition shows up. Here are the three scenarios mid-market CEOs and CTOs most commonly encounter.

Scenario 1: Vendor Delivers, Goes Dark. Who Controls the Codebase?

The vendor finishes the project. The engagement closes. Six months later, a critical bug surfaces. Your team can’t modify the code because the vendor retained IP rights. You can’t bring in another developer without the original vendor’s consent. The vendor isn’t responsive. Or worse: they’ve pivoted to a new business model and want a fee to grant modification rights.

You’re not stuck because your team lacks skills. You’re stuck because you don’t own the software you’re running.

S3Corp’s industry analysis notes that between 50% and 70% of software outsourcing projects miss their original scope, budget, or timeline. Operational lock-in after delivery is a direct consequence of the same planning failures that produce those outcomes.

Scenario 2: Company Wants to Pivot the Product, Vendor Demands License Fees

Your market moved. The internal tool needs new capabilities, or you want to spin off a product line. Your legal team discovers that the original development contract left IP with the vendor. Any modification requires their consent. Any derivative product requires a license negotiation.

You’ve built on a foundation you don’t own.

The vendor isn’t necessarily acting in bad faith. They may simply be enforcing the signed contract. But the leverage is entirely theirs, and the cost of extracting yourself comes entirely from your budget.

Scenario 3: Acquisition Due Diligence Uncovers Unclear IP Chain

An acquirer shows up. Their legal team runs IP due diligence. They discover the code ownership contract either doesn’t exist or contains “will assign” language rather than a present assignment. The IP transfer was never actually completed.

The deal stalls. The acquirer wants price adjustments or representations and warranties that the founders can’t honestly make. Some deals die here entirely. Others close at reduced valuations with expensive indemnification provisions attached.

An unclear IP chain is one of the most common due diligence deal-killers in software company acquisitions. By the time an acquisition offer appears, it’s too late to fix the original contract.

Three common risk scenarios showing the business consequences of a missing or incomplete IP assignment clause.

Documentation Transfer: The Second Ownership Problem Most Contracts Ignore

Owning the code is necessary. It’s not sufficient. A codebase without its documentation is an asset you legally own but practically can’t operate.

Why Owning the Code Without the Documentation Leaves You Operationally Dependent

A mid-market CTO who receives a GitHub repo at project close owns the source files. But without architecture diagrams, API documentation, system design decisions, and onboarding materials, their team can’t extend the system, debug production issues with confidence, or hand it off to a new vendor if the relationship ends.

As Dreamix’s research on vendor transitions notes, documentation gaps, undocumented dependencies, and lost configuration details create expensive problems months after transition completion. Legal IP ownership doesn’t resolve operational dependency on the people who built the system.

You can own the code and still be dependent on the vendor who understands it. That dependency becomes visible only when something breaks or the relationship ends.

What a Complete Handover Actually Includes

A complete documentation transfer covers, at a minimum:

– UML architecture diagrams and system design documents

– Architecture decision records (why key technical choices were made, not just what was chosen)

– API references (Swagger/Postman collections)

– User story libraries and sprint documentation

– Test coverage reports and QA artifacts

– Deployment and configuration documentation

The most commonly omitted item is architecture decision records. Those capture the reasoning behind design choices. Without them, the team inheriting the system has the what but not the why. And “why” is exactly what they need when something breaks or needs to change.

Documentation transfer should be a contractual obligation with defined deliverables, not a best-effort handoff at project close.
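One way to make those deliverables checkable at handover is a small script that verifies each one exists in the delivered repository. This is a sketch under stated assumptions: the deliverable names follow the list above, but the directory paths and the function name are hypothetical and would need to match whatever locations your contract actually specifies.

```python
from pathlib import Path
import tempfile

# Hypothetical mapping of contractual deliverables to repository paths.
HANDOVER_DELIVERABLES = {
    "Architecture diagrams and design documents": "docs/architecture",
    "Architecture decision records": "docs/adr",
    "API references": "docs/api",
    "User stories and sprint documentation": "docs/user-stories",
    "Test coverage reports and QA artifacts": "docs/qa",
    "Deployment and configuration documentation": "docs/deployment",
}


def missing_deliverables(repo_root: Path, deliverables: dict = HANDOVER_DELIVERABLES) -> list:
    """Return deliverables whose path is absent or is an empty directory."""
    missing = []
    for name, relative_path in deliverables.items():
        target = repo_root / relative_path
        if not target.exists():
            missing.append(name)
        elif target.is_dir() and not any(target.iterdir()):
            missing.append(name)
    return missing


# Demo against a throwaway directory that contains only API docs.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "docs" / "api").mkdir(parents=True)
    (root / "docs" / "api" / "openapi.yaml").write_text("openapi: 3.0.0\n")
    for item in missing_deliverables(root):
        print("MISSING:", item)
```

Presence is a low bar: the check confirms a folder is not empty, not that the architecture decision records inside it explain anything. It is still enough to catch a handover that skipped a deliverable entirely.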

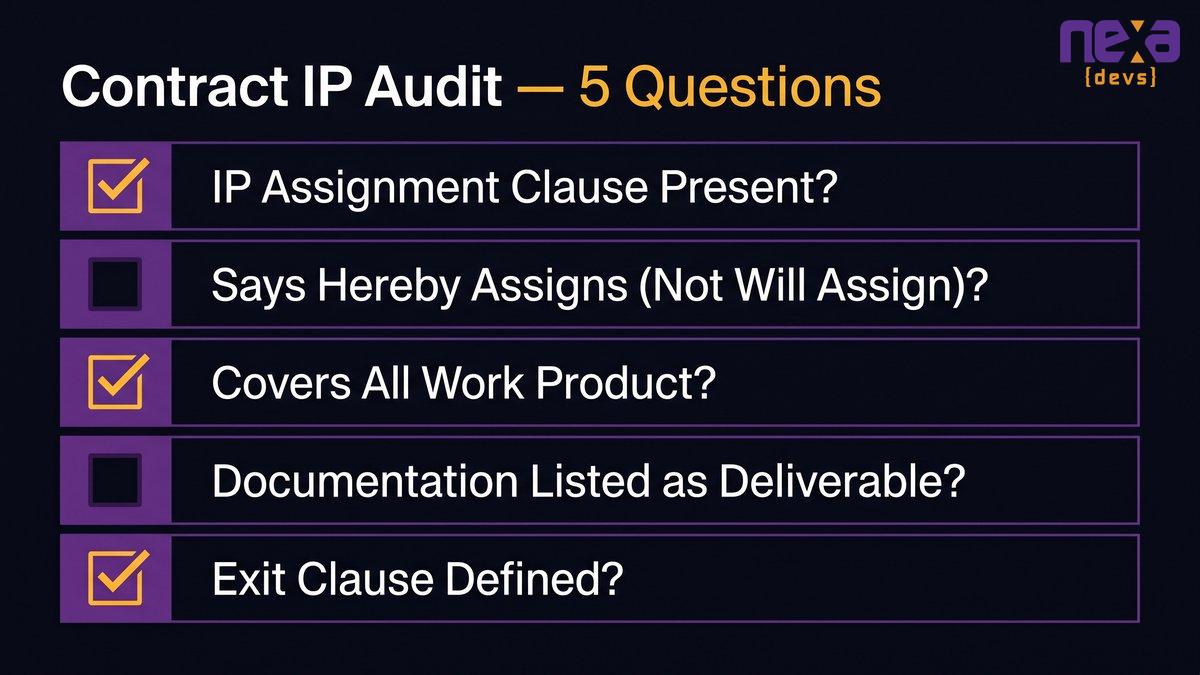

How to Audit Your Current Vendor Contract Before It Becomes a Problem

A checklist-style visual showing five contract sections a CEO or CTO should review with their legal team before an issue surfaces.

If you have an active vendor engagement or a recently closed project, spend 30 minutes with your legal team on these questions. Not when an issue surfaces. Now.