
AI-Augmented SDLC: How the Dev Lifecycle Is Collapsing Into Continuous Flow

The traditional software development lifecycle was designed around human speed. Requirements take weeks because people need time to debate, draft, and revise. Code review takes days because engineers are context-switching between five other things. Testing is a phase at the end because running a full suite used to take hours and required dedicated QA time to interpret results.

AI doesn’t work at human speed. It drafts requirements in minutes. It reviews pull requests as they’re opened. It continuously generates and runs test suites. When you embed AI at that level, something structural changes: the phases that were separated by time start to overlap. The AI-augmented SDLC isn’t just a faster version of the old process. It’s a different shape.

The SDLC Was Never Designed for AI, and It Shows

Phase handoffs in the traditional SDLC weren’t a design philosophy. They were a concession to human limitations.

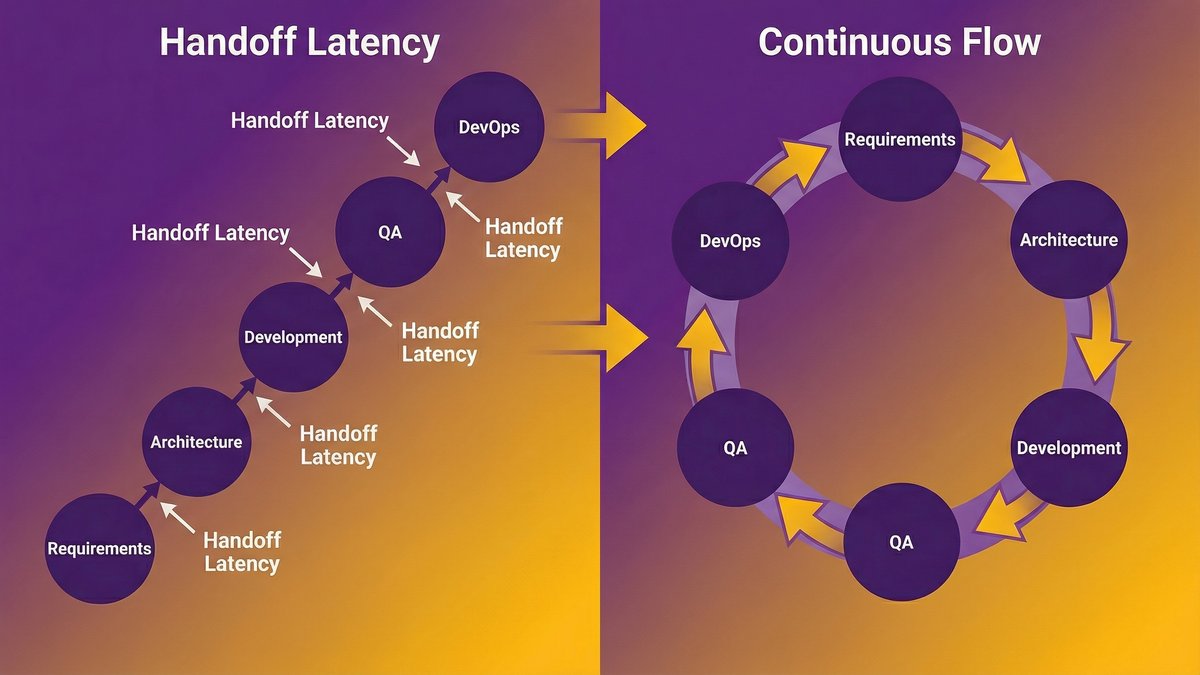

A requirements document gets written, then handed to architects, then handed to developers, then handed to QA, then handed to DevOps. Each handoff exists because each group needs time: to read, to process, to schedule, to act. The latency between phases isn’t inherent to building software. It’s inherent to coordinating humans across a sequential process.

AI removes most of that latency. An AI agent can read a spec file and scaffold a code structure in the time it takes a developer to brew coffee. A code review agent can flag issues as soon as a commit is pushed. A test generation tool can write unit tests alongside the code they exercise. The “handoff” between requirements and development doesn’t need to be a gate anymore. It can be continuous.

That’s where most SDLC frameworks are breaking. They weren’t built for a world where the work between phases takes minutes. The process assumes days, sometimes weeks, between each stage. When you compress the work that justified those gaps, the gaps themselves become the bottleneck.
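A rough way to see this is to split each phase's elapsed time into work time and the wait before the next phase picks it up. The sketch below uses invented numbers (the phase names, hours, and the 10x compression factor are illustrative assumptions, not measurements) to show how the share of lead time spent waiting grows when only the work is compressed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Phase:
    name: str
    work_hours: float  # time spent actually doing the work
    wait_hours: float  # handoff gap before the next phase starts


def wait_share(phases: list[Phase]) -> float:
    """Fraction of total lead time spent waiting in handoffs."""
    work = sum(p.work_hours for p in phases)
    wait = sum(p.wait_hours for p in phases)
    return wait / (work + wait)


def compress_work(phases: list[Phase], factor: float) -> list[Phase]:
    """Shrink work time by `factor` while leaving handoff gaps untouched."""
    return [Phase(p.name, p.work_hours / factor, p.wait_hours) for p in phases]


# Hypothetical numbers, for illustration only.
baseline = [
    Phase("requirements", work_hours=40, wait_hours=16),
    Phase("development", work_hours=80, wait_hours=24),
    Phase("testing", work_hours=24, wait_hours=16),
    Phase("deployment", work_hours=8, wait_hours=8),
]
with_ai = compress_work(baseline, factor=10)

print(f"wait share before: {wait_share(baseline):.0%}")  # 30%
print(f"wait share after:  {wait_share(with_ai):.0%}")   # 81%
```

Under these assumed figures, waiting goes from under a third of the lead time to about four fifths of it, even though no handoff got any slower.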

Phase handoffs were built for human latency, not machine speed

Consider the sprint review. It exists because human developers need a checkpoint: a moment to step back, assess what shipped, and plan what comes next. That rhythm makes sense when the work between checkpoints took two weeks of focused human effort.

Now consider what happens when AI agents can generate 60–70% of the code in that sprint, run test coverage automatically, and flag architectural drift against the spec file in real time. The two-week sprint isn’t accelerating the work. It’s imposing a human-paced structure onto a process that no longer operates at human pace.

Where the friction lives: requirements to code, code to review, review to deploy

The highest-friction handoffs in the traditional SDLC are exactly the ones AI handles best: translating ambiguous requirements into structured specs, checking code against those specs, and validating that tested code is safe to ship. These aren’t coordination problems between humans anymore. They’re tasks that AI tools now run continuously in the background.

The CTO who wants to capture that value doesn’t need better tools. They need a delivery model designed to run without the handoff gaps those tools were originally built to bridge.

What AI Is Actually Doing to Each SDLC Phase

AI isn’t transforming the SDLC as a whole. It’s compressing specific tasks within each phase, and the cumulative effect of that compression is restructuring the sequence.

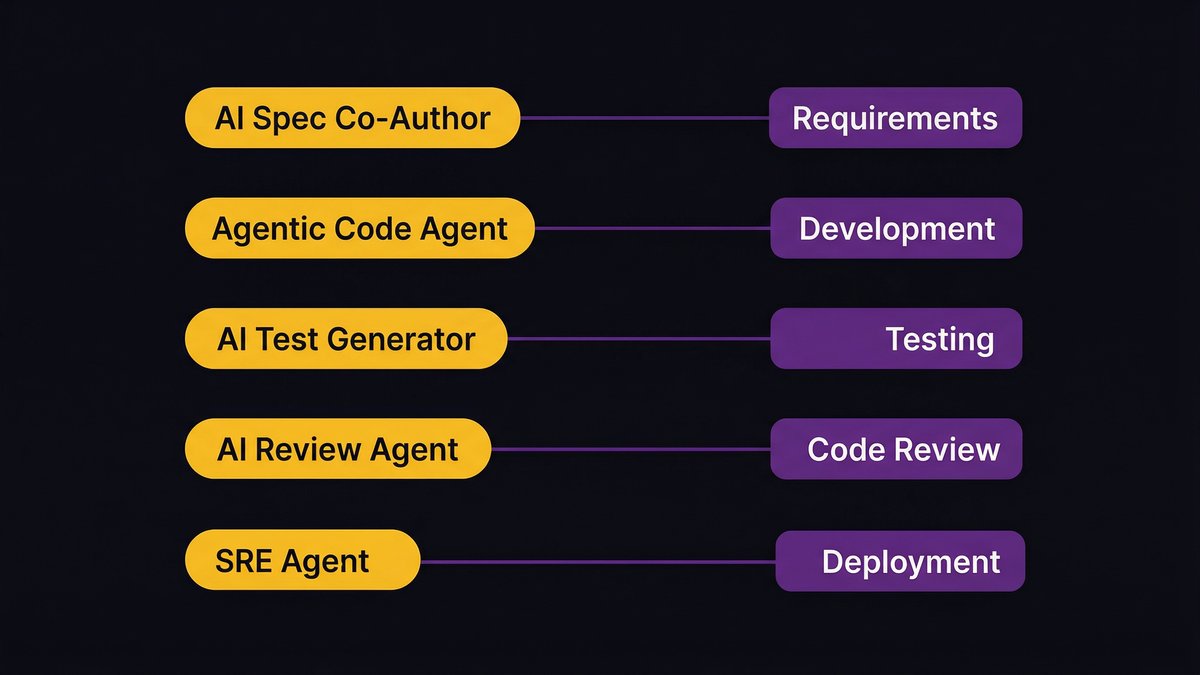

Requirements and planning: AI as spec co-author

The slowest part of requirements gathering has always been translation: turning a business problem described in operational language into a technical spec written in engineering language. AI bridges that gap directly.

Microsoft’s engineering teams now use spec-driven development where AI agents generate service blueprints and code scaffolds from requirements before a human developer opens a file. According to the Microsoft Azure blog (2026), the AI can produce a comprehensive requirements list, service blueprint, and initial code scaffold “before I’ve opened Notepad and started to decipher requirements.” That’s not a productivity gain within the old process. That’s the requirements phase and the initial development phase starting to merge.

Sprint planning follows the same pattern. AI-assisted story generation, effort estimation, and task breakdown reduce planning cycles from days to hours.

Development: from copilot assist to autonomous code agents

The copilot phase, in which AI suggests completions while a developer types, is already giving way to agentic coding, where AI agents handle self-contained tasks with minimal human direction. According to Forrester (2026), agentic software development represents “the next phase of AI-driven engineering tools,” where AI agents can “plan, generate, modify, test, and explain software artifacts” end to end.

The implication for the SDLC isn’t faster typing. It’s that development is no longer a purely sequential process where one thing gets built before the next thing gets designed. Agentic systems can work across multiple components in parallel, within guardrails defined by the spec.

Testing and review: AI-generated test suites and agent-assisted code review

Testing is shifting from a post-development phase to a continuous practice. Nexa Devs builds AI-generated unit and integration tests alongside code delivery as a standard practice in every sprint, rather than as a separate end-phase. The effect is significant: according to the Qodo 2025 AI Code Quality Report, AI-assisted code reviews led to quality improvements in 81% of cases, compared to 55% with traditional review processes.

Code review is the same story. According to a 2026 Atlassian RovoDev study, 38.7% of comments left by AI agents in code reviews lead to additional code fixes. That’s not a reviewer being replaced. That’s the review cycle running faster and generating more actionable signals per pass.

Deployment and operations: agentic SRE and continuous observability

The final phase is moving in the same direction. SRE agents now handle proactive day-2 operations: monitoring for anomalies, analyzing logs for root cause, and surfacing triage information before a human is paged. Microsoft’s AI-led SDLC framework includes a dedicated “SRE Agent” step in its production model, framing it not as a support function but as a full phase of the delivery loop.

Deployment itself is increasingly deterministic and automated. The human judgment required at the gate between “tested code” and “deployed code” narrows when AI testing coverage is high and deployment pipelines have tight validation gates.

Why Compressing Phases Eventually Eliminates the Handoffs Between Them

This is the structural argument most SDLC articles miss. Compressing work within phases is one thing. But the AI-augmented SDLC doesn’t just speed up each phase. It removes the reason the handoffs existed in the first place.

Wix CTO Yaniv Even-Haim has made the same point: AI moves engineering focus from managing handoffs to AI-native, end-to-end ownership, where frontend, backend, and mobile boundaries disappear.

The latency handoff: what takes time when AI handles the work

A handoff in the traditional SDLC has two components: the transfer of context (briefing the next team on what the previous team produced) and the wait time before the next team can act (scheduling, prioritization, capacity).

AI handles both. An agent consuming a spec file doesn’t need a briefing meeting. It reads the artifact directly. An agent that’s monitoring a CI pipeline doesn’t need to be scheduled. It acts when the trigger fires. The asynchronous, human-coordinated handoff collapses into a synchronous, machine-executed transition.

Stack Overflow losing 77% of new questions since 2022 isn’t just a statistic about AI coding tools gaining adoption. It signals that the “I don’t know how to do this next step” pause, the moment that used to generate a handoff, a Slack thread, or a Stack Overflow question, is disappearing from the development loop.

Continuous flow as an emergent property, not a process redesign

Here’s the important distinction: continuous flow in an AI-native SDLC isn’t a methodology you adopt. It’s what emerges when you remove the latency that made sequential phases necessary.

You don’t need to redesign your sprint structure. You need to embed AI deeply enough in each phase that the time between phases compresses on its own. When requirements and scaffolding happen in the same tool, when code and tests are written together, when deployment gates close automatically on quality signals, the “waterfall-inspired” sequence doesn’t need to be dismantled. It dissolves.

That’s a fundamentally different framing than “agile at scale” or “DevOps maturity.” It’s not about process redesign. It’s about what process structure survives when AI is doing the work that made each step take as long as it did.

The Productivity Gap: Why Most Teams Aren’t Seeing It

Here’s the uncomfortable reality: most teams have AI tools. Most aren’t seeing continuous flow.

According to research cited by ShiftMag (2026), 92.6% of developers now use AI coding assistants. Yet productivity gains across organizations remain around 10%. The tools are everywhere. The transformation isn’t.

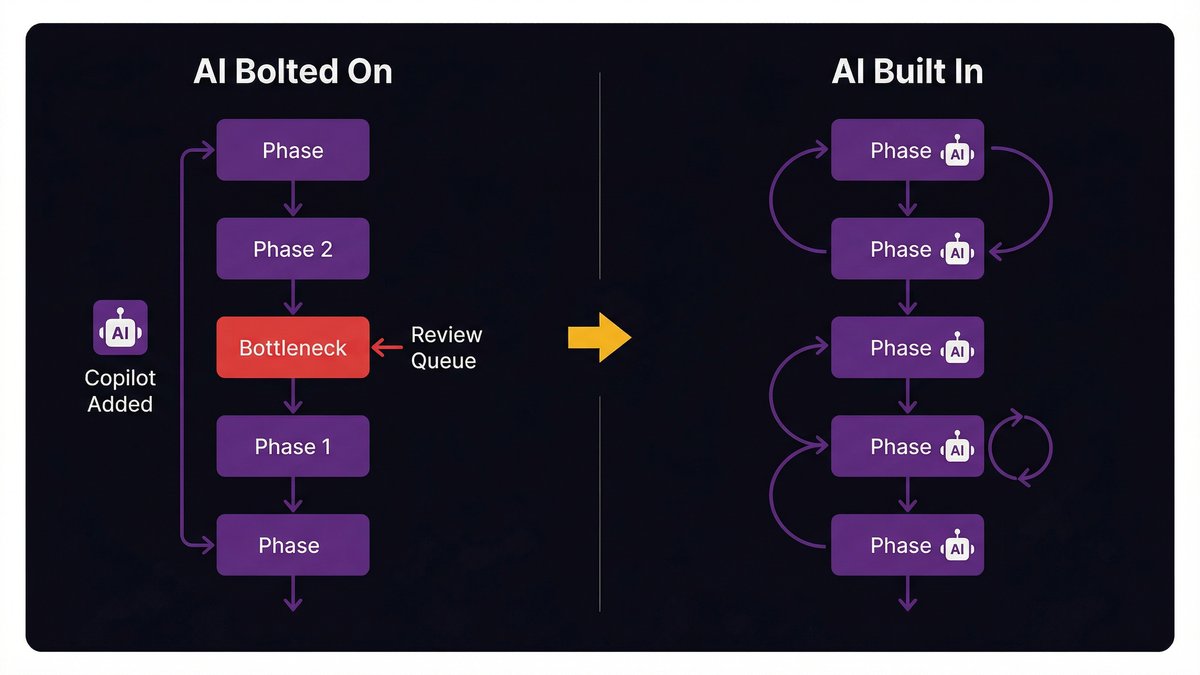

AI bolted on vs. AI built in: the architecture difference

Adding GitHub Copilot to a team that runs a traditional two-week sprint with a separate QA phase and a manual deployment process does not produce an AI-augmented SDLC. It produces a traditional SDLC with a faster typing speed. The phase gates are still there. The handoff latency is still there. The sequential bottlenecks are still there.

The distinction matters for CTOs because “we’ve adopted AI tools” is not the same claim as “our delivery model is designed around AI.” The first is a tool purchase. The second is an architectural decision about how software gets built, how teams are structured, and how phases relate to each other.

Why 93% AI adoption can still yield only 10% productivity gain

Consider a concrete example. A development team adopts an AI code generation tool. The developers write code faster. But the code still goes into a review queue managed by two senior engineers who are bottlenecked. The faster-written code waits in the same queue as before. The cycle time doesn’t improve because the bottleneck isn’t code generation. It’s review throughput.

This is the condition attached to McKinsey’s finding that AI can improve developer productivity by up to 45%: the entire delivery model has to be redesigned around AI tooling, not just the individual task layer. According to McKinsey (cited by Ciklum, 2026), that 45% figure assumes AI is structurally embedded in the delivery process. Add a copilot to a broken handoff structure, and you get a faster arrival at the same queue.
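The review-queue example can be put in numbers. In a pipeline of sequential stages, sustained throughput is capped by the slowest stage. The sketch below is a deliberately simple model of that idea; the stage names and weekly capacities are hypothetical.

```python
def pipeline_throughput(capacity_per_week: dict[str, float]) -> tuple[str, float]:
    """Return the bottleneck stage and the throughput it allows.

    In a sequential pipeline, sustained throughput cannot exceed
    the capacity of the slowest stage.
    """
    bottleneck = min(capacity_per_week, key=capacity_per_week.get)
    return bottleneck, capacity_per_week[bottleneck]


# Hypothetical capacities, in pull requests per week.
before = {"coding": 20, "review": 12, "qa": 15, "deploy": 30}
# Assume an AI coding tool doubles coding capacity; nothing else changes.
after = {**before, "coding": 40}

print(pipeline_throughput(before))  # ('review', 12)
print(pipeline_throughput(after))   # ('review', 12)
```

Doubling the coding stage leaves the result unchanged because review was already the constraint. Only raising review capacity would move the number.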

The teams capturing real gains aren’t the ones with the most AI tools. They’re the ones whose delivery model was designed with AI in every phase from the start.

What an AI-Native Delivery Model Actually Looks Like

This is what changes when AI is built in rather than bolted on.

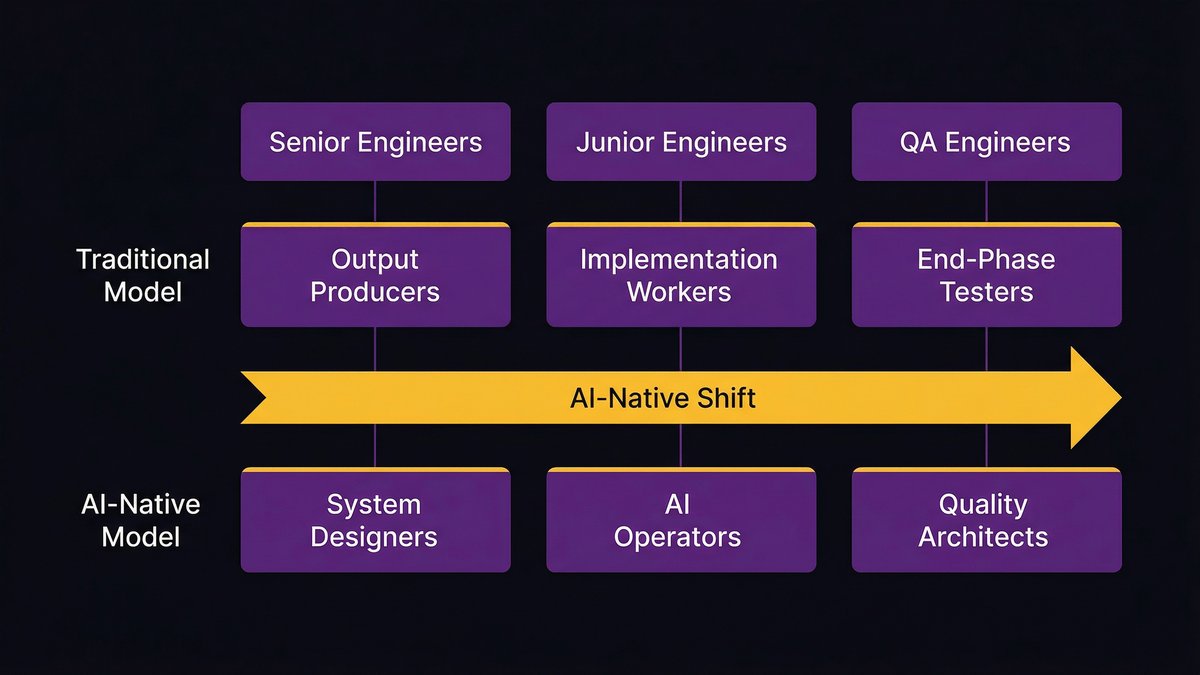

Team structure when phases collapse: roles, rituals, and sprint redesign

When AI handles spec translation, test generation, code review signals, and deployment validation, the engineering roles that existed to manage those handoffs change. Not disappear, but change.

Senior engineers shift from “output producers” to “system designers”: defining the spec files and architectural guardrails that AI agents work within. Junior engineers shift from “implementation workers” to “AI operators”: directing agents, reviewing outputs, and catching the cases where the agent misreads the intent. QA engineers become quality architects: designing the testing strategy and coverage criteria against which the AI-generated tests are built.

The sprint doesn’t disappear either. But it’s no longer structured around “what gets built this sprint.” It’s structured around “what gets verified and shipped this sprint.” The work that used to take most of the sprint happens in hours. The sprint time that remains goes to integration, validation, and the genuinely hard architectural decisions that AI can’t resolve.

The toolchain layer: what gets embedded at each phase vs. what stays human

Not every task in the SDLC is a good AI candidate. The good candidates are well-defined, pattern-based, repetitive, and tolerance-sensitive: code generation, test writing, deployment validation, log analysis. The poor candidates are novel architectural decisions, ambiguous product requirements that need stakeholder negotiation, and security threat modeling that requires context AI doesn’t have.

The practical question for a CTO isn’t “which AI tools should we buy?” It’s “which specific tasks in our delivery model are AI-executable, and how do we restructure the process so those tasks run as background jobs rather than as sequential handoffs?”

Nexa Devs’ delivery model is built around this distinction. Every sprint phase has AI tooling embedded at the task level. The human work is explicitly structured around the decisions AI can’t make. The result is a delivery model where AI handles what AI handles well, and engineers handle what engineers handle well, with no process overhead bridging the two.

Running Modernization and AI Embedding in Parallel

The CTO reading this might be thinking: “Our stack isn’t ready for this. We’ve got legacy systems, undocumented services, and a monolith we’ve been meaning to decompose for three years.”

That’s not a blocker. It’s the starting point.

Why you don’t need a fully modernized stack to start

According to MIT’s State of AI in Business 2025, 95% of enterprise AI pilots fail to produce measurable ROI. The most common explanation is that organizations try to finish their modernization work before embedding AI, treating them as sequential initiatives. Modernize first, then add AI.

That sequence is wrong. The modernization work is slow, expensive, and produces business value only at the end. Embedding AI in the phases that are already running, right now, produces value immediately while the modernization work happens in parallel. You don’t need a clean microservices architecture to get AI-assisted code review on the services you’re building today. You don’t need a fully documented legacy system to use AI-generated tests on new feature development.

The incremental embedding approach: phase-by-phase AI activation

Start with the phase that has the clearest, most self-contained AI application in your current process. For most teams, that’s code review or test generation. Embed AI tooling at that specific task. Measure the cycle time before and after. Then move to the next phase.
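One minimal way to take that before-and-after measurement is to compare median cycle times from timestamps the team already records. The sketch assumes each work item is stored as a (start, end) pair of datetimes, such as pull request opened and merged; the helper names and sample data are invented for illustration.

```python
from datetime import datetime, timedelta
from statistics import median


def median_cycle_hours(items: list[tuple[datetime, datetime]]) -> float:
    """Median elapsed hours between the start and end of each work item."""
    return median((end - start) / timedelta(hours=1) for start, end in items)


def span(start: str, end: str) -> tuple[datetime, datetime]:
    """Parse a (start, end) pair written as 'YYYY-MM-DD HH:MM'."""
    fmt = "%Y-%m-%d %H:%M"
    return datetime.strptime(start, fmt), datetime.strptime(end, fmt)


# Invented sample data: review cycle times before and after embedding AI review.
before = [
    span("2025-03-03 09:00", "2025-03-05 09:00"),  # 48 h
    span("2025-03-04 10:00", "2025-03-05 16:00"),  # 30 h
    span("2025-03-06 08:00", "2025-03-09 08:00"),  # 72 h
]
after = [
    span("2025-04-07 09:00", "2025-04-07 15:00"),  # 6 h
    span("2025-04-08 10:00", "2025-04-08 22:00"),  # 12 h
    span("2025-04-09 08:00", "2025-04-10 04:00"),  # 20 h
]

print(f"median before: {median_cycle_hours(before):.1f} h")  # 48.0 h
print(f"median after:  {median_cycle_hours(after):.1f} h")   # 12.0 h
```

The median is used here rather than the mean so that one unusually slow item does not dominate the comparison; that is a design choice of this sketch, not something the process requires.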

Each phase you embed AI in produces two benefits: direct cycle time improvement in that phase, and reduced handoff latency to the next phase. The compound effect builds faster than most teams expect, because the gains aren’t linear. Faster code review reduces the time tests wait to run. Faster test runs reduce the time before deployment validation. Deployment validation runs automatically, removing a gate entirely.

The parallel modernization track feeds this process too. As legacy services get decomposed and documented, they become available for AI tooling that legacy code made impossible. Modernization and AI embedding aren’t competing priorities. They’re the same priority with two execution paths.

The Organizational Design Question CTOs Are Avoiding

The hardest part of the AI-augmented SDLC isn’t technical. It’s organizational.

When phases collapse, what happens to phase-based team ownership?

Most engineering organizations are structured around phases. There’s a QA team. There’s a DevOps team. There’s an architecture review board. These structures made sense when phases were discrete and each required specialized human judgment at a specific point in the sequence.

When AI compresses those phases and the handoffs between them shrink, the phase-based team structure starts to create friction rather than reduce it. The QA team is still reviewing tests that AI generated three days ago. The architecture board is still scheduling reviews for decisions that AI tools flagged during development. The DevOps team is still managing deployment gates that automated pipeline validation is perfectly capable of closing.

Reorganizing around this is politically difficult. Engineers whose roles were defined by phase ownership will push back. Senior engineers who built their authority on being the human gateway between phases will feel that authority threatened. A CTO who wants to capture the real gains of an AI-native SDLC has to navigate that organizational reality, not just the technical one.

Our position is this: the teams that restructure their organizations around AI-native delivery will outperform the teams that add AI tools to phase-based structures. The performance gap between those two cohorts will widen every year.

Governance, accountability, and human-in-the-loop in a continuous flow model

Continuous flow doesn’t mean no checkpoints. It means checkpoints happen faster and are based on automated signals rather than scheduled reviews. The governance question isn’t “where do humans stay in the loop?” It’s “what signals should trigger human review, and how quickly can humans act on them?”

PwC’s Responsible AI in the SDLC framework (2026) frames this well: governance in an agentic SDLC requires “human review checkpoints, automated testing in CI/CD pipelines, and documented decision-making processes.” The checkpoints exist. They’re just triggered by system signals rather than calendar events. That’s a meaningful governance upgrade for most teams, not a governance risk.
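As a sketch of what signal-triggered review could look like, the function below inspects a few automated signals for a change and returns the reasons, if any, that a human should look at it. The signal names, thresholds, and protected paths are assumptions made for illustration; they do not come from the PwC framework or from any particular CI system.

```python
from dataclasses import dataclass, field


@dataclass
class ChangeSignals:
    """Automated signals collected for one change (all fields hypothetical)."""
    tests_passed: bool
    coverage_delta: float    # percentage-point change in test coverage
    ai_review_findings: int  # unresolved findings from an AI reviewer
    touched_paths: list[str] = field(default_factory=list)


# Assumed policy values; a real team would set its own.
PROTECTED_PREFIXES = ("auth/", "billing/", "infra/")
MAX_COVERAGE_DROP = 1.0
MAX_OPEN_FINDINGS = 0


def human_review_reasons(signals: ChangeSignals) -> list[str]:
    """Return the reasons a human should review; an empty list means none fired."""
    reasons = []
    if not signals.tests_passed:
        reasons.append("test suite failed")
    if signals.coverage_delta < -MAX_COVERAGE_DROP:
        reasons.append("coverage dropped beyond threshold")
    if signals.ai_review_findings > MAX_OPEN_FINDINGS:
        reasons.append("unresolved AI review findings")
    if any(path.startswith(PROTECTED_PREFIXES) for path in signals.touched_paths):
        reasons.append("change touches a protected path")
    return reasons


routine = ChangeSignals(True, 0.4, 0, ["docs/readme.md"])
risky = ChangeSignals(True, -2.5, 1, ["auth/session.py"])

print(human_review_reasons(routine))  # []
print(human_review_reasons(risky))
```

Returning the reasons rather than a bare yes or no keeps the decision documented, which fits the governance framing above: the checkpoint still exists, and the record shows which signal fired it.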

The accountability structure changes, too. When AI is generating code and writing tests, the engineer who defined the spec and the architectural guardrails is more accountable for the quality of what ships than the engineer who typed the most lines. That accountability shift needs to be explicit in how teams are evaluated and how work is recognized.

Nexa Devs builds software delivery teams around AI-native workflows, not the other way around. If you’re evaluating whether your current delivery model can capture the gains of an AI-augmented SDLC, talk to our team about your specific stack and delivery structure.

What is AI-augmented SDLC?

The AI-augmented SDLC embeds AI tooling into every phase of software delivery: requirements, development, testing, review, and deployment. Unlike adding a code completion tool to an existing workflow, the AI-augmented SDLC redesigns how phases relate to each other so that AI handles the tasks that create handoff latency between them.

What is the AI process in SDLC?

In an AI-augmented SDLC, AI operates at the task level within each phase. During requirements, AI helps translate business language into technical specs. During development, AI agents generate, review, and test code. During deployment, AI validates quality signals and manages pipeline gates. The human role shifts to defining guardrails, making architectural decisions, and reviewing AI output on complex or novel problems.

What is agentic software development?

Agentic software development uses autonomous AI agents that can plan, write, test, and modify code with minimal human intervention on well-defined tasks. Unlike copilot tools that assist while a human drives, agentic tools take a high-level instruction and execute it across multiple steps. They’re most effective when the scope is bounded and the success criteria are clear.

Is AI writing 90% of code?

Not yet at most organizations. Around 41% of all code written is currently AI-generated. The 90% figure comes from Dario Amodei’s projection, which hasn’t materialized on that timeline. What matters for CTOs is whether the delivery model is structured to make AI output reviewable, testable, and architecturally sound.